Blktap

blktap Overview

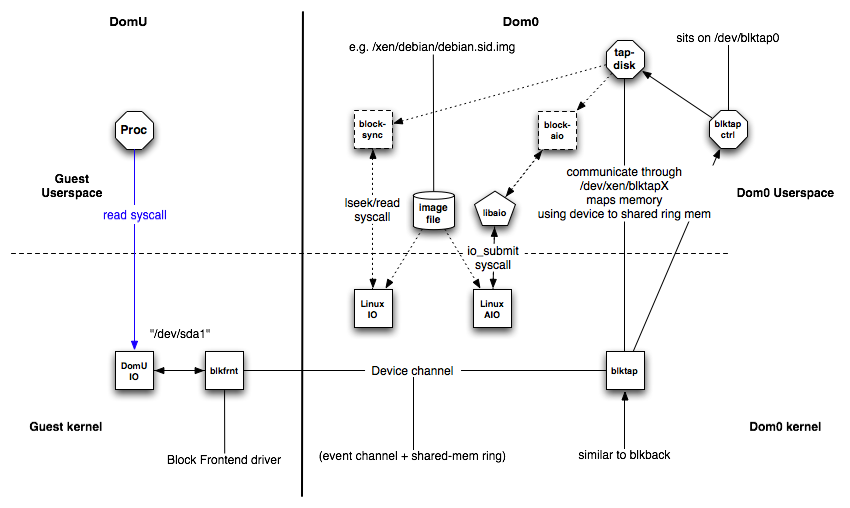

The blktap ('block tap') userspace toolkit provides a user-level disk I/O interface. Its main current application is to replace the common loopback driver for file-based images, because it performs better. The blktap mechanism involves a kernel driver running in Dom0 that acts similarly to the existing Xen/Linux blkback driver, and a set of associated user-level libraries. Using these tools, blktap allows virtual block devices presented to VMs to be implemented in userspace and to be backed by raw partitions, files, network, etc. [ 1 ]

Advantages

The key benefit of blktap is that it makes it easy and fast to write arbitrary block backends, and that these user-level backends actually perform very well. Specifically:

- Metadata disk formats such as Copy-on-Write, encrypted disks, sparse formats and other compression features can be easily implemented.

- Accessing file-based images from userspace avoids the problems with flushing dirty pages that are present in the Linux loopback driver. (Specifically, doing a large number of writes to an NFS-backed image doesn't result in the OOM killer going berserk.)

- Per-disk handler processes enable easier userspace policing of block resources, and process-granularity QoS techniques (disk scheduling and related tools) may be trivially applied to block devices.

- It's very easy to take advantage of userspace facilities such as networking libraries, compression utilities, peer-to-peer file-sharing systems and so on to build more complex block backends.

- Crashes are contained -- incremental development/debugging is very fast.

Using the Tools

Prepare the image for booting. For qcow files use the qcow utilities installed earlier (also from the tools/blktap/ dir). qcow-create for example generates a blank standalone image or a file-backed CoW image. img2qcow takes an existing image or partition and creates a sparse, standalone qcow-based file.

The userspace disk agent blktapctrl is configured to start automatically via xend. Alternatively, you can start it manually:

blktapctrl

Customise the VM config file to use the 'tap' handler, followed by the driver type. e.g. for a raw image such as a file or partition:

disk = ['tap:aio:<FILENAME>,sda1,w']

e.g. for a qcow image:

disk = ['tap:qcow:<FILENAME>,sda1,w']
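Since xm config files use Python syntax, the two disk lines above can also be generated rather than typed by hand. The following is a minimal sketch; the helper name `tap_disk`, its parameters and the example path are hypothetical and not part of blktap, and only the two driver types shown above are accepted.

```python
# Hypothetical helper (not part of blktap): formats disk entries for a VM config.
SHOWN_DRIVERS = {"aio", "qcow"}  # only the driver types shown in this section


def tap_disk(driver, image_path, guest_dev="sda1", mode="w"):
    """Return a 'tap:<driver>:<path>,<dev>,<mode>' disk string."""
    if driver not in SHOWN_DRIVERS:
        raise ValueError(f"unknown tap driver type: {driver!r}")
    if mode not in ("r", "w"):
        raise ValueError(f"mode must be 'r' or 'w', got {mode!r}")
    return f"tap:{driver}:{image_path},{guest_dev},{mode}"


# Example path is an assumption for illustration only.
disk = [tap_disk("qcow", "/var/images/guest.qcow")]
print(disk)
```

Rejecting unknown driver names early keeps a typo such as 'qcwo' from surfacing only when the guest fails to boot.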

The following disk types are currently supported:

- Raw Images (both on partitions and in image files)

- File-backed Qcow disks

- Standalone sparse Qcow disks

- Fast shareable RAM disk between VMs (requires some form of cluster-based filesystem support e.g. OCFS2 in the guest kernel)

- Some VMDK images - your mileage may vary

Raw and QCow images have asynchronous backends and so should perform fairly well. VMDK is based directly on the qemu vmdk driver, which is synchronous (a.k.a. slow).

Mounting images in Dom0 using the blktap driver

Tap (and blkback) disks are also mountable in Dom0 without requiring an active VM to attach. You will need to build a xenlinux Dom0 kernel that includes the blkfront driver (e.g. the default 'make world' or 'make kernels' build). Simply use the xm command-line tool to activate the backend disks, and blkfront will generate a virtual block device that can be accessed in the same way as a loop device or partition:

e.g. for a raw image file <FILENAME> that would normally be mounted using the loopback driver like:

mount -o loop <FILENAME> /mnt/disk

do the following instead:

xm block-attach 0 tap:aio:<FILENAME> /dev/xvda1 w 0

mount /dev/xvda1 /mnt/disk

Don't use the loop driver when mounting!

In this way, you can use any of the userspace device-type drivers built with the blktap userspace toolkit to open and mount disks such as qcow or vmdk images:

xm block-attach 0 tap:qcow:<FILENAME> /dev/xvda1 w 0

mount /dev/xvda1 /mnt/disk
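The attach-then-mount sequence is the same for every driver type, so it can be scripted. The sketch below only builds and prints the two commands; it does not run them. The function name `dom0_mount_commands` and the example image path are hypothetical, while the argument order of `xm block-attach` is copied from the commands above.

```python
import shlex


def dom0_mount_commands(driver, image_path, vdev="/dev/xvda1", mountpoint="/mnt/disk"):
    """Build the xm block-attach and mount commands as argument lists."""
    # Domain 0 as target, writable mode 'w', backend domain 0, as in the text.
    attach = ["xm", "block-attach", "0", f"tap:{driver}:{image_path}", vdev, "w", "0"]
    # Mount the blkfront device directly; no '-o loop' here.
    mount = ["mount", vdev, mountpoint]
    return [attach, mount]


commands = dom0_mount_commands("qcow", "/var/images/guest.qcow")
for cmd in commands:
    print(shlex.join(cmd))
```

To actually execute the sequence, each list could be passed to `subprocess.run(cmd, check=True)` as root on a Dom0 that has the xm tool.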

How it works

Short Description

Working in conjunction with the kernel blktap driver, all disk I/O requests from VMs are passed to the userspace daemon (using a shared memory interface) through a character device. Each active disk is mapped to an individual device node, allowing per-disk processes to implement individual block devices where desired. The userspace drivers are implemented using asynchronous (Linux libaio), O_DIRECT-based calls to preserve the unbuffered, batched and asynchronous request dispatch achieved with the existing blkback code. We provide a simple, asynchronous virtual disk interface that makes it quite easy to add new disk implementations.

Detailed Description

Components

blktap consists of the following components:

- blktap kernel driver in Dom0

- blkfront instance of the driver in DomU

- XenBus connecting blkfront and blktap

This is similar to the general XenSplitDrivers architecture. Additionally blktap has the following components in Dom0:

- /dev/blktap0, a character device in Dom0

- /dev/xen/blktapX, where X is a number which represents one particular virtual disk

- blktapctrl, a daemon running in userspace, that controls the creation of new virtual disks

- tapdisk, each tapdisk process in userspace is backed by one or several image files

- /var/run/tap/tapctrlreadX, /var/run/tap/tapctrlwriteX; named pipes used for communication between the individual tapdisk processes and blktapctrl using the write_msg and read_msg functions.

- A frontend ring (fe_ring) for mapping the data of the I/O requests from the Guest VM to the tapdisk in userspace.

- A shared ring (sring) for communication?

The Linux kernel driver in /drivers/xen/blktap/ reacts to the ioctl, poll and mmap system calls.

When xend is started the userspace daemon blktapctrl is started, too. When booting the Guest VM the XenBus is initialized as described in XenSplitDrivers. The request for a new virtual disk is propagated to blktapctrl, which creates a new character device and two named pipes for communication with a newly forked tapdisk process.

After opening the character device the shared memory is mapped to the fe_ring using the mmap system call. The tapdisk process opens the image file and sends information about the image’s size back to blktapctrl, which stores it. After this initialization tapdisk executes a select system call on the two named pipes. On an event it checks if the tap-fd is set and if it is, tries to read a request from the frontend ring.
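The wait-then-check step described above can be illustrated with a small, Unix-only sketch. Anonymous pipes stand in for the named pipes and for the tap file descriptor, which is an assumption made so the example runs anywhere; real tapdisk is written in C and waits on the pipes under /var/run/tap and on the character device.

```python
import os
import select


def readable_fds(fds, timeout=1.0):
    """Block in select() and return the descriptors that have data to read."""
    readable, _, _ = select.select(fds, [], [], timeout)
    return readable


# Stand-ins (hypothetical): one control pipe and one pipe playing the tap fd.
ctrl_r, ctrl_w = os.pipe()
tap_r, tap_w = os.pipe()
try:
    os.write(tap_w, b"request")            # simulate an event on the tap fd
    ready = readable_fds([ctrl_r, tap_r])
    # Only read a request if the tap fd is among the ready descriptors.
    payload = os.read(tap_r, 64) if tap_r in ready else None
    print("tap fd ready:", tap_r in ready, "payload:", payload)
finally:
    for fd in (ctrl_r, ctrl_w, tap_r, tap_w):
        os.close(fd)
```

The control pipe stays silent in this run, so only the tap descriptor is reported as ready.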

The XenBus connection between DomU and Dom0 is used by XenStore to negotiate the backend/frontend connection. After the setup of both backend and frontend a shared ring page and an event channel are negotiated. These are used for any further communication between backend and frontend. I/O requests issued in the Guest VM are handled in the Guest OS and forwarded using these two communication channels.

There is a trade-off between delay and throughput which is controlled by modifying the number of requests until the blktap driver is notified.
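A toy model makes this trade-off concrete. It assumes, purely for illustration, that the driver is notified once per full batch of requests and once more for any remainder; the function names are hypothetical and the real driver's notification policy may differ.

```python
def notifications_needed(total_requests, batch_size):
    """Notifications sent when one is issued per batch (ceiling division)."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return -(-total_requests // batch_size)


def worst_case_wait(batch_size):
    """Extra requests the first request of a batch waits for before notification."""
    return batch_size - 1


for batch in (1, 8, 32):
    print(batch, notifications_needed(64, batch), worst_case_wait(batch))
```

Larger batches mean fewer notifications for the same number of requests (better throughput), but the first request in each batch waits longer (higher delay).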

Depending on the event type, the blktap driver notifies either blktapctrl (by returning from poll) or the appropriate tapdisk process (by waking it up). The shared frontend ring works as described in ring.h.

tapdisk reads the request from the frontend ring. For synchronous I/O, it performs the I/O and returns the request immediately. For asynchronous I/O, it submits a batch of requests to the Linux AIO subsystem. Both mechanisms read from the image file. In the asynchronous case, tapdisk uses the non-blocking system call io_getevents to check whether the I/O requests have completed.

The information about completed requests is propagated in the frontend ring. The blktap driver is notified by the tapdisk process with the ioctl system call.
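The request and response flow through the frontend ring can be modelled with a few counters. This is a simplified sketch, not the ring.h implementation: the class `ToyRing` and its method names are hypothetical, and it assumes that requests and responses share the same slots and are tracked by free-running producer and consumer indices.

```python
class ToyRing:
    """Toy ring in which requests and responses share the same slots."""

    def __init__(self, size=8):
        if size < 1 or size & (size - 1):
            raise ValueError("size must be a power of two")
        self.size = size
        self.slots = [None] * size
        self.req_prod = 0  # advanced by the frontend when it queues a request
        self.req_cons = 0  # advanced by the backend when it takes a request
        self.rsp_prod = 0  # advanced by the backend when it queues a response
        self.rsp_cons = 0  # advanced by the frontend when it takes a response

    def push_request(self, req):
        # A slot is free again only after its response has been consumed.
        if self.req_prod - self.rsp_cons >= self.size:
            raise BufferError("ring full")
        self.slots[self.req_prod % self.size] = req
        self.req_prod += 1

    def pop_request(self):
        if self.req_cons == self.req_prod:
            return None
        req = self.slots[self.req_cons % self.size]
        self.req_cons += 1
        return req

    def push_response(self, rsp):
        # A response may only reuse a slot whose request was already consumed.
        if self.rsp_prod >= self.req_cons:
            raise RuntimeError("no consumed request to respond to")
        self.slots[self.rsp_prod % self.size] = rsp
        self.rsp_prod += 1

    def pop_response(self):
        if self.rsp_cons == self.rsp_prod:
            return None
        rsp = self.slots[self.rsp_cons % self.size]
        self.rsp_cons += 1
        return rsp


ring = ToyRing(size=4)
for sector in (0, 8, 16):
    ring.push_request({"op": "read", "sector": sector})

# Backend side: drain the requests, then publish one response per request.
pending = []
while (req := ring.pop_request()) is not None:
    pending.append(req)
for req in pending:
    ring.push_response({"sector": req["sector"], "status": "ok"})

# Frontend side: collect the completed sectors.
completed = []
while (rsp := ring.pop_response()) is not None:
    completed.append(rsp["sector"])
print(completed)
```

In the real system the index updates are followed by a notification (the ioctl mentioned above on the tapdisk side); the toy model leaves notifications out.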

Using the same XenSplitDrivers mechanism, the data is returned to the frontend in the Guest VM.

Source Code Organization

Paths for the userspace drivers and programs [ 1 ] are relative to

tools/blktap/

The different userspace drivers are defined in the following source code files:

| Userspace driver | Type of I/O |
| --- | --- |
| Raw file/partition | asynchronous |
| Raw file/partition | synchronous |
| Ramdisk | synchronous |
| QCow images | asynchronous |
| VMware images - .vmdk files | synchronous |

Additional image- and synchronousness-independent functionality is defined in drivers/tapdisk.c.

The blktapctrl program is defined in the file drivers/blktapctrl.c.

The programs to convert from and to QCow images are defined in drivers/qcow2raw.c, drivers/qcow-create.c and drivers/img2qcow.c.

The XenBus interface for blktap is defined in lib/xenbus.c and the interface to XenStore is defined in xs_api.c.

The actual kernel driver part of blktap is typically found under:

linux-2.6-xen-sparse/drivers/xen/blktap/

The main functionality is defined in blktap.c. xenbus.c has the description of the interface to XenBus.

Sample usage

(from Jonathan Ludlam's reply to Dave Scott on the xen-api mailing list)

Here is a sample sequence of blktap usage. First, allocate a minor number in the kernel:

tap-ctl allocate

This prints the path that will later be the block device, for example /dev/xen/blktap-2/tapdev4.

Then, spawn a tapdisk process:

tap-ctl spawn

This prints a line such as "tapdisk spawned with pid 28453".

Now, you need to attach the two together:

tap-ctl attach -m 4 -p 28453

Finally, you can open a VHD:

tap-ctl open -m 4 -p 28453 -a vhd:/path/to/vhd

Or perhaps you'd like to open a raw file?

tap-ctl open -m 4 -p 28453 -a aio:/path/to/raw/file

Or maybe an NBD server?

tap-ctl open -m 4 -p 28453 -a nbd:127.0.0.1:8000

(For this last one to work you'll need a blktap from the github/xen-org/blktap trunk-ring3 branch.)

You can also open a secondary to start mirroring things to:

tap-ctl open -m 4 -p 28453 -a vhd:/path/to/vhd -2 vhd:/path/to/mirror/vhd

Afterwards, you'll need to tear things down. Close the tapdisk image:

tap-ctl close -m 4 -p 28453

Detach the tapdisk process from the kernel (this also kills the tapdisk process):

tap-ctl detach -m 4 -p 28453

Now free the kernel minor number:

tap-ctl free -m 4

Also useful is stats querying:

tap-ctl stats -m 4 -p 28453
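The minor number and pid have to be carried from the first two commands into every later one, which is easy to get wrong by hand. The sketch below parses them from the sample outputs quoted above and builds the remaining commands without running anything. The function names are hypothetical, and the output formats are assumed to match the samples in this section exactly.

```python
import re


def parse_minor(allocate_output):
    """Extract the minor number from a path such as /dev/xen/blktap-2/tapdev4."""
    match = re.search(r"tapdev(\d+)\s*$", allocate_output)
    if match is None:
        raise ValueError(f"unexpected allocate output: {allocate_output!r}")
    return int(match.group(1))


def parse_pid(spawn_output):
    """Extract the pid from a line such as 'tapdisk spawned with pid 28453'."""
    match = re.search(r"pid (\d+)", spawn_output)
    if match is None:
        raise ValueError(f"unexpected spawn output: {spawn_output!r}")
    return int(match.group(1))


def tapctl_lifecycle(minor, pid, image):
    """Return the attach, open, close, detach and free commands, in order."""
    ids = ["-m", str(minor), "-p", str(pid)]
    return [
        ["tap-ctl", "attach", *ids],
        ["tap-ctl", "open", *ids, "-a", image],
        ["tap-ctl", "close", *ids],
        ["tap-ctl", "detach", *ids],
        ["tap-ctl", "free", "-m", str(minor)],
    ]


minor = parse_minor("/dev/xen/blktap-2/tapdev4")
pid = parse_pid("tapdisk spawned with pid 28453")
steps = tapctl_lifecycle(minor, pid, "vhd:/path/to/vhd")
for cmd in steps:
    print(" ".join(cmd))
```

Keeping the teardown commands in the same list as the setup commands makes it harder to forget the final `free`, which releases the kernel minor number.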

References

- 1: based on README file by original developer