Difference between revisions of "Xen ARM with Virtualization Extensions whitepaper"

Revision as of 12:37, 21 March 2014

Xen on ARM

What is Xen?

Xen is a small-footprint Open Source hypervisor. Xen on ARM amounts to less than 90K lines of code. Xen is licensed under the GPLv2 and has a healthy and diverse community that supports it and funds its development. Xen is hosted by the Linux Foundation, which provides stewardship for the project.

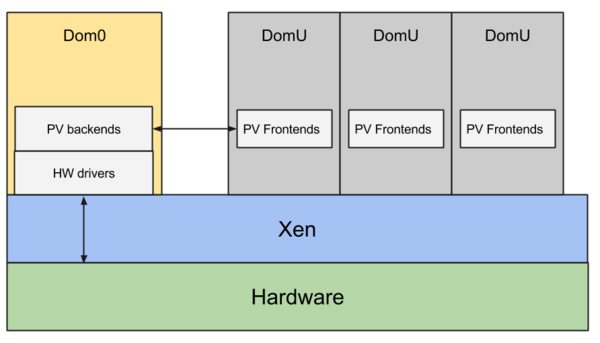

The Xen Architecture

Xen is a type-1 hypervisor: it runs directly on the hardware, and everything else in the system runs as a virtual machine on top of Xen, including Dom0, the first virtual machine created by Xen. Dom0 is privileged and drives the devices on the platform. Xen virtualizes CPU, memory, interrupts and timers, providing virtual machines with one or more virtual CPUs, a fraction of the memory of the system, a virtual interrupt controller (indistinguishable from a physical interrupt controller from the guest's point of view) and a virtual timer.

Xen assigns devices such as the SATA controller and network cards to Dom0, taking care of remapping MMIO regions and IRQs. Dom0 (typically Linux, but it could also be FreeBSD or another operating system) runs the same device drivers for these devices that it would use when running natively. Dom0 also runs a set of drivers called “paravirtualized backends” to give the other, unprivileged virtual machines access to disk, network, etc. The operating system running as DomU (an unprivileged guest in Xen terminology) gets access to a set of generic virtual devices by running the corresponding “paravirtualized frontend” drivers. A single backend services multiple frontends. A pair of paravirtualized drivers exists for each of the most common classes of devices: disk, network, console, framebuffer, etc. They usually live in the operating system kernel, e.g. Linux. A few PV backends can also run in userspace in QEMU.

The frontends connect to the backends using a simple shared ring protocol over a page in memory. Xen provides all the tools for discovery and for setting up the initial communication. Xen also provides a mechanism for the frontend and the backend to share additional pages and to notify each other via software interrupts.
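The shared ring idea can be illustrated with a toy model. The sketch below is not Xen's actual ring implementation: the class and field names are hypothetical, it models only the request direction, and it ignores responses, memory barriers and notifications. It shows a fixed-size ring in which the frontend advances a producer index and the backend advances a consumer index.

```python
class SharedRing:
    """Toy model of one shared page holding a fixed number of request slots."""

    def __init__(self, size=8):
        # A power-of-two size lets free-running indices be masked into slots.
        assert size > 0 and size & (size - 1) == 0
        self.size = size
        self.slots = [None] * size
        self.req_prod = 0  # advanced only by the frontend (hypothetical name)
        self.req_cons = 0  # advanced only by the backend (hypothetical name)

    def push_request(self, request):
        """Frontend side: queue a request; return False if the ring is full."""
        if self.req_prod - self.req_cons == self.size:
            return False
        self.slots[self.req_prod & (self.size - 1)] = request
        self.req_prod += 1
        return True

    def pop_request(self):
        """Backend side: take the oldest pending request, or None if empty."""
        if self.req_cons == self.req_prod:
            return None
        request = self.slots[self.req_cons & (self.size - 1)]
        self.req_cons += 1
        return request


ring = SharedRing(size=4)
for sector in range(4):
    ring.push_request({"op": "read", "sector": sector})

# A fifth request does not fit until the backend consumes something.
ring_was_full = not ring.push_request({"op": "read", "sector": 99})
first = ring.pop_request()
accepted_after_pop = ring.push_request({"op": "read", "sector": 99})
```

Because each index is written by only one side, the two ends never need to modify the same field, which is one reason a design of this shape works well over a single shared page.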

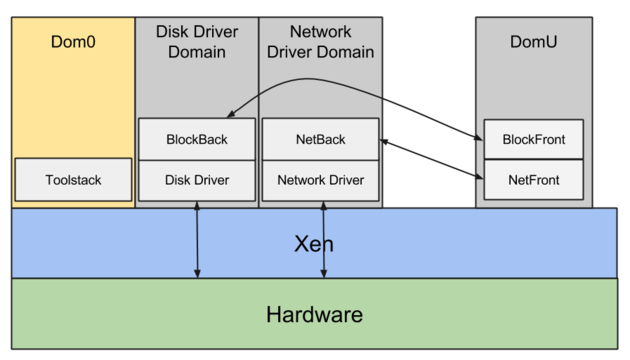

However, there is no reason to run all the device drivers and all the paravirtualized backends in Dom0. The Xen architecture allows “driver domains”: unprivileged virtual machines whose only purpose is to run the driver and the paravirtualized backend for one device. For example, you can have a disk driver domain, with the SATA controller assigned to it, running the SATA driver and the disk paravirtualized backend. You can have a network driver domain, with the network card assigned to it, running the network driver and the network paravirtualized backend. As driver domains are just unprivileged guests, they make the system more secure because they allow large pieces of code, such as the entire network stack, to run in unprivileged mode. Even if a malicious guest manages to take over the paravirtualized network backend and the network driver domain, it would not be able to take over the entire system. Driver domains also improve isolation and resilience: the network driver domain is fully isolated from the disk driver domain and from Dom0. If the network driver crashes, it cannot take down the entire system, only the network. It is possible to reboot just the driver domain while everything else stays online.

Finally, driver domains allow Xen users to disaggregate and componentize the system in ways that would not be possible otherwise. For example, they allow users to run a real-time operating system alongside the main OS to drive a device that has real-time constraints. They allow users to run a legacy OS to drive old devices that have no drivers in modern operating systems. They allow users to separate and isolate critical functionality from less critical functionality. For example, they allow users to run an OS such as QNX to drive most of the devices on the platform and Android for the UI.

Xen on ARM: a cleaner architecture

Xen on ARM is not just a straight 1:1 port of x86 Xen. We exploited the opportunity to clean up the architecture and get rid of the cruft that accumulated during the many years of x86 development. First, we removed any need for emulation. Emulated interfaces are slow and insecure. QEMU, used for emulation on x86 Xen, is a nice and well-maintained Open Source project, but it is large in both binary size and lines of source code. In software, the smaller and the simpler, the better. Xen on ARM does not need QEMU because it does not do any emulation. It achieves this by exploiting virtualization support in hardware as much as possible and by using paravirtualized interfaces for IO.

On x86 two different kinds of Xen guests coexist: PV guests, such as Linux and other Open Source OSes, and HVM guests, usually Microsoft Windows, although any OS can run as an HVM guest. PV and HVM guests look quite different from one another from the hypervisor's point of view. The difference is exposed all the way up to the user, who needs to choose how to run the guest by setting a line in the VM config file. On ARM we did not want to introduce this differentiation, which we felt was artificial and could confuse our users. Xen on ARM supports only one kind of guest, which is the best of both worlds: like PV guests on x86, it does not need any emulation and relies on paravirtualized interfaces for IO as early as possible in the boot sequence. Like HVM guests on x86, it exploits virtualization support in hardware as much as possible and does not require invasive changes to the guest operating system kernel in order to run.

The new architecture designed for Xen on ARM is much cleaner and simpler and it turned out to be a very good match for the hardware.

Xen on ARM: virtualization extensions

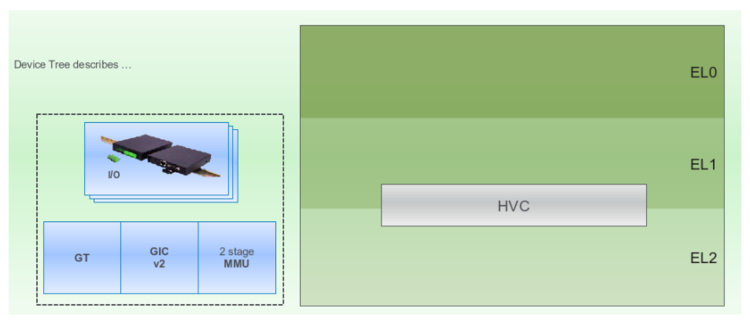

ARM provides 3 levels of execution: EL0 (user mode), EL1 (kernel mode) and EL2 (hypervisor mode). The ARM virtualization extensions introduce a new instruction, HVC, to switch from kernel mode to hypervisor mode. The MMU supports 2 stages of translation. The generic timers and the GIC interrupt controller are also virtualization aware.

Xen runs entirely and only in hypervisor mode. It leaves kernel mode (EL1) for the guest operating system kernel and user mode (EL0) for guest user space applications. Type-2 hypervisors need to switch frequently between hypervisor mode and kernel mode. By running entirely in EL2, Xen significantly reduces the number of context switches required. The new instruction, HVC, is used by the guest kernel to issue hypercalls to the hypervisor. Xen uses second stage translation in the MMU to assign memory to virtual machines. Xen also takes over the generic timers and the GIC interrupt controller. It uses the generic timers to receive timer interrupts, to inject timer interrupts into virtual machines and to expose the counter to them. It uses the GIC to receive interrupts and to inject interrupts into guests.
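To make the two stages of translation concrete, here is a deliberately simplified model. Real page tables are multi-level structures walked by the MMU. In this sketch each stage is flattened into a dictionary from page number to page number, and all names and addresses are made up for illustration. The guest kernel controls stage 1 (virtual address to intermediate physical address), while the hypervisor controls stage 2 (intermediate physical address to machine address).

```python
PAGE_SIZE = 4096


def translate(page_table, address):
    """Map `address` through a flat page table; raise on a missing mapping."""
    page, offset = divmod(address, PAGE_SIZE)
    if page not in page_table:
        raise LookupError(f"fault: no mapping for page {page:#x}")
    return page_table[page] * PAGE_SIZE + offset


# Stage 1, owned by the guest kernel: virtual page -> intermediate physical page.
guest_stage1 = {0x10: 0x2, 0x11: 0x7}

# Stage 2, owned by the hypervisor: intermediate physical page -> machine page.
# Page 0x7 is intentionally absent: the guest was never given that memory.
hypervisor_stage2 = {0x2: 0x8000A}


def guest_access(virtual_address):
    """Apply both stages, as the hardware would on a guest memory access."""
    intermediate = translate(guest_stage1, virtual_address)
    return translate(hypervisor_stage2, intermediate)


machine_address = guest_access(0x10 * PAGE_SIZE + 0x123)

try:
    guest_access(0x11 * PAGE_SIZE)
    stage2_fault = False
except LookupError:
    # The guest mapped the page in stage 1, but stage 2 has no entry for it.
    stage2_fault = True
```

The second access shows the isolation property: whatever the guest writes into its own stage 1 tables, it can only reach memory for which the hypervisor installed a stage 2 mapping.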

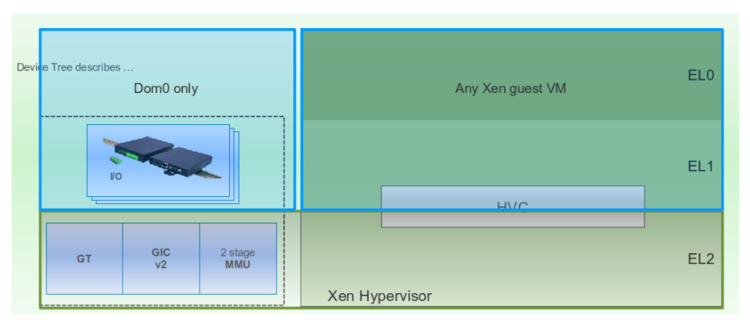

Xen discovers the hardware via device tree. It assigns all the devices that it does not use to Dom0 by remapping the corresponding MMIO regions and interrupts. It generates a flattened device tree binary for Dom0 that describes exactly the environment exposed to it: the number of virtual CPUs that Xen created for it (which may be less than the number of physical CPUs on the platform), the amount of memory that Xen gave to it (necessarily less than the amount of physical memory available) and only the devices that Xen re-assigned to it (not all devices are assigned to Dom0; at the very least, one UART is not). Xen also adds a device tree node to advertise its own presence on the platform. Dom0 boots exactly the same way it would boot natively. By using device tree to discover the hardware, Dom0 finds out exactly what is available and loads the drivers for it. It does not try to access interfaces that are not present, and therefore Xen does not need to do any emulation. By finding the Xen hypervisor node, Dom0 knows that it is running on Xen and can therefore initialize the paravirtualized backends. DomUs would load the paravirtualized frontends instead.
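The way the Dom0 view is derived from the host description can be sketched as a filtering step. This is only an illustration under stated assumptions: device trees are modelled as plain dictionaries rather than flattened binaries, the helper `build_dom0_view` and the device names are hypothetical, and the virtualized GIC and timers are left out of the model. The `"xen,xen"` compatible string mirrors the one used by the Xen device tree binding, but the node layout here is simplified.

```python
def build_dom0_view(host, xen_owned, dom0_vcpus, dom0_memory_mb):
    """Return the environment Dom0 would see, given what Xen keeps for itself."""
    if dom0_memory_mb >= host["memory_mb"]:
        raise ValueError("Dom0 must get less memory than the platform has")
    return {
        # Dom0 may see fewer virtual CPUs than there are physical CPUs.
        "cpus": min(dom0_vcpus, host["cpus"]),
        "memory_mb": dom0_memory_mb,
        # Only devices that Xen does not use are passed on to Dom0.
        "devices": {
            name: node
            for name, node in host["devices"].items()
            if name not in xen_owned
        },
        # Extra node that advertises the presence of the hypervisor.
        "hypervisor": {"compatible": "xen,xen"},
    }


# Hypothetical platform description.
host_tree = {
    "cpus": 4,
    "memory_mb": 2048,
    "devices": {
        "uart0": {"reg": 0x1C090000},
        "uart1": {"reg": 0x1C0A0000},
        "sata": {"reg": 0x1C100000},
        "ethernet": {"reg": 0x1C200000},
    },
}

dom0_tree = build_dom0_view(
    host_tree, xen_owned={"uart0"}, dom0_vcpus=2, dom0_memory_mb=512
)
running_on_xen = "hypervisor" in dom0_tree
```

In this model Dom0 never learns that `uart0` exists, so it has no reason to touch it, which is the property that lets Xen avoid emulation.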

Xen on ARM: code size

|  | Common | ARMv7 | ARMv8 | Total |
|---|---|---|---|---|
| xen/arch/arm | 5,122 | 1,969 | 821 | 7,912 |
| C | 5,023 | 406 | 344 | 5,773 |
| ASM | 99 | 1,563 | 477 | 2,139 |
| xen/include/asm-arm | 2,315 | 563 | 666 | 3,544 |

We wrote previously that the new architecture turned out to be a very good match for the hardware. This is proven by the code size: the smaller the better. Xen on ARM is 1/10 of the code size of x86_64 Xen, while still providing a similar level of features.
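The figures in the table can be cross-checked with a few lines of arithmetic. The sketch below copies the numbers from the table and assumes, based only on the way the numbers add up, that the C and ASM rows are a breakdown of the xen/arch/arm row.

```python
# Columns: (Common, ARMv7, ARMv8, Total), copied from the table.
rows = {
    "xen/arch/arm": (5122, 1969, 821, 7912),
    "C": (5023, 406, 344, 5773),
    "ASM": (99, 1563, 477, 2139),
    "xen/include/asm-arm": (2315, 563, 666, 3544),
}

# Each Total should equal Common + ARMv7 + ARMv8.
row_totals_ok = all(
    common + armv7 + armv8 == total
    for common, armv7, armv8, total in rows.values()
)

# Assumption: C + ASM together account for xen/arch/arm in every column.
arch_split_ok = all(
    rows["C"][i] + rows["ASM"][i] == rows["xen/arch/arm"][i] for i in range(4)
)

# Share of xen/arch/arm written in assembly, as a percentage.
asm_share = 100 * rows["ASM"][3] / rows["xen/arch/arm"][3]
```

Both checks hold for the numbers shown, and assembly accounts for roughly a quarter of the lines under xen/arch/arm.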

Porting Xen to a new SoC

In terms of devices, Xen only uses the GIC, the generic timers, the SMMU for device assignment and one UART for debugging. Porting Xen to a new SoC is therefore a simple task. It usually involves writing a new UART driver for Xen (if the SoC comes with an unsupported UART) and the code to bring up secondary CPUs (if the platform does not support PSCI, for which Xen already has a driver).

Porting an operating system to Xen on ARM

Porting an OS to Xen on ARM could not be easier: it does not require any changes to the core of the operating system kernel, only a few new drivers to get the paravirtualized frontends running and obtain access to network, disk, console, etc. The paravirtualized frontends rely on the grant table for page sharing, on xenbus for discovery and on event channels for notifications. Once the OS supports these basic building blocks, introducing the paravirtualized frontends is easy. You are likely to be able to reuse the existing frontends in Linux (GPLv2 licensed) or the ones in FreeBSD or NetBSD (BSD licensed).
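How the three building blocks fit together can be shown with a small in-process model. Nothing here is the real Xen interface: the classes `GrantTable` and `EventChannels`, the dictionary standing in for the store reached through xenbus, and the key paths are all hypothetical simplifications. The sketch only shows the order of operations: the frontend shares a page and publishes how to reach it, the backend discovers and maps it, and notifications drive the processing.

```python
class GrantTable:
    """Hands out references to pages that the owner agreed to share."""

    def __init__(self):
        self._pages = {}

    def grant_access(self, page):
        ref = len(self._pages)
        self._pages[ref] = page
        return ref

    def map_grant(self, ref):
        return self._pages[ref]


class EventChannels:
    """Delivers notifications to whoever is bound to a port."""

    def __init__(self):
        self._handlers = {}

    def alloc(self):
        port = len(self._handlers)
        self._handlers[port] = None
        return port

    def bind(self, port, handler):
        self._handlers[port] = handler

    def notify(self, port):
        handler = self._handlers[port]
        if handler is not None:
            handler()


grants = GrantTable()
events = EventChannels()
store = {}  # stands in for the key-value store reached through xenbus

# Frontend: share a page and publish how to reach it (illustrative key names).
ring_page = []  # a list stands in for the shared page
store["device/vbd/0/ring-ref"] = grants.grant_access(ring_page)
store["device/vbd/0/event-channel"] = events.alloc()

# Backend: discover the device, map the page, and wait for notifications.
backend_ring = grants.map_grant(store["device/vbd/0/ring-ref"])
served = []


def on_event():
    # Drain every request currently queued on the shared page.
    while backend_ring:
        served.append(backend_ring.pop(0))


events.bind(store["device/vbd/0/event-channel"], on_event)

# Frontend: queue a request on the shared page and notify the backend.
ring_page.append("read sector 0")
events.notify(store["device/vbd/0/event-channel"])
```

An OS port needs its own versions of these three pieces first. After that, each frontend driver is mostly a matter of following the same publish, map and notify sequence for its device class.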