Network Throughput and Performance Guide

Contents

- 1 Introduction

- 2 Contributing

- 3 Scenarios

- 4 Technical Overview

- 5 Symptoms, probable causes, and advice

- 6 Making throughput measurements

- 7 Diagnostic tools

- 8 Recommended configurations

- 9 Tweaks

- 9.1 Automatic IRQ Balancing in Dom0

- 9.2 Manual IRQ Balancing in Dom0

- 9.3 Changing the Number of Dom0 VCPUs

- 9.4 Changing the Number of Netback Threads in Dom0

- 9.5 Enabling NIC Offloading

- 9.6 Enabling Jumbo Frames

- 9.7 Linux TCP parameter settings

- 9.8 Pinning a VM to specific CPUs

- 9.9 Switching between Linux Bridge and Open VSwitch

- 9.10 Enabling/disabling IOMMU

- 9.11 Enabling SR-IOV

- 10 Acknowledgements

Introduction

Setting up an efficient network in the world of virtual machines can be a daunting task. Hopefully, this guide will be of some help, and allow you to make good use of your network resources.

The guide applies to XCP 1.0 and later, and to XenServer 5.6 FP1 and later. Much of it is applicable to earlier versions, too.

For a general guide on XenServer network configurations, see Designing XenServer Network Configurations.

Contributing

If you would like to contribute to this guide, please submit your feedback to Rok Strniša, or get an account and edit the page yourself.

If you would like to be notified about updates to this guide, please create an account and add this page to your watchlist.

Scenarios

There are many possible scenarios where network throughput can be relevant. The major ones that we have identified are:

- dom0 throughput: The traffic is sent/received directly by `dom0`.

- single-VM throughput: The traffic is sent/received by a single VM.

- multi-VM throughput: The traffic is sent/received by multiple VMs, concurrently. Here, we are interested in aggregate network throughput.

- single-VCPU VM throughput: The traffic is sent/received by single-VCPU VMs.

- single-VCPU single-TCP-thread VM throughput: The traffic is sent/received by a single TCP thread in single-VCPU VMs.

- multi-VCPU VM throughput: The traffic is sent/received by multi-VCPU VMs.

- network throughput for storage: The traffic sent/received originates from/is stored on a storage device.

Technical Overview

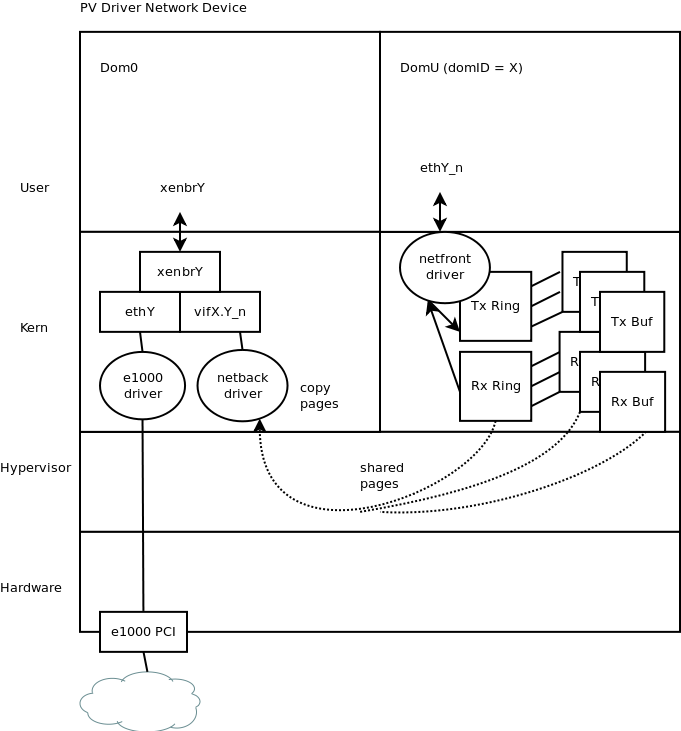

Sending network traffic to and from a VM is a fairly complex process. The figure applies to PV guests, and to HVM guests with PV drivers.

When a process in a VM (say, a VM with domID equal to X) wants to send a network packet, the following occurs:

- A process in the VM generates a network packet P, and sends it to one of the VM's virtual network interfaces (VIFs), e.g. ethY_n for some network Y and some connection n.

- The driver for that VIF, the netfront driver, then shares the memory page (which contains the packet P) with the backend domain by establishing a new grant entry. A grant reference is part of the request pushed onto the transmit shared ring (Tx Ring).

- netfront then notifies, via an event channel (not on the diagram), one of the netback threads in dom0 (the one responsible for ethY_n) where in the shared pages the packet P is stored. (XenStore is used to set up the initial connection between the front-end and the back-end, deciding on what event channel to use, and where the shared rings are.)

- netback (in dom0) fetches P, processes it, and forwards it to vifX.Y_n.

- The packet is then handed to the back-end network stack, where it is treated according to its configuration just like any other packet arriving on a network device.

When a VM is to receive a packet, the process is almost the reverse of the above. The key difference is that on receive there is a copy being made: it happens in dom0, and is a copy from back-end owned memory into a Tx Buf, which the guest has granted to the back-end domain. The grant references to these buffers are in the request on the Rx Ring (not Tx Ring).

Symptoms, probable causes, and advice

There are many potential bottlenecks. Here is a list of symptoms (and associated probable causes and advice):

- I/O is extremely slow on my Hardware Virtualised Machine (HVM), e.g. a Windows VM.

  - Verifying the symptom: Compare the results of an I/O speed test on the problem VM and a healthy VM; they should be at least an order of magnitude different.

  - Probable cause: The HVM does not have PV drivers installed.

  - Background: With PV drivers, an HVM can make direct use of some of the underlying hardware, leading to better performance.

  - Recommendation: Install PV drivers.

- VM's VCPU is fully utilised.

  - Verifying the symptom: Run xentop in dom0. This should give a fairly good estimate of aggregate usage for all VCPUs of a VM; pressing V reveals how many seconds were spent in which VM's VCPU. Running VCPU measurement tools inside the VM does not give reliable results; they can only be used to find rough relative usage between applications in a VM.

  - Background: When a VM sends or receives network traffic, it needs to do some basic packet processing.

  - Probable cause: There is too much traffic for that VCPU to handle.

  - Recommendation 1: Try enabling NIC offloading; see Tweaks (below) on how to do this.

  - Recommendation 2: Try running the application that does the sending/receiving of network traffic with multiple threads. This will give the OS a chance to distribute the workload over all available VCPUs.

- HVM VM's first (and possibly only) VCPU is fully utilised.

  - Verifying the symptom: Same as above.

  - Background: Currently, only a VM's first VCPU can handle interrupt requests.

  - Probable cause: The VM is receiving too many packets for its current setup.

  - Recommendation 1: If the VM has multiple VCPUs, try to associate application processing with non-first VCPUs.

  - Recommendation 2: Use more (1-VCPU) VMs to handle receive traffic, and a workload balancer in front of them.

  - Recommendation 3: If the VM has multiple VCPUs and there's no definite need for it to have multiple VCPUs, create multiple 1-VCPU VMs instead (see Recommendation 2).

  - Plans for improvement: The underlying architecture needs to be improved so that a VM's non-first VCPUs can process interrupt requests.

- In dom0, a high percentage of a single VCPU is spent processing system interrupts.

  - Verifying the symptom: Run top in dom0, then press z (for colours) and 1 (to show VCPU breakdown). Check if there is a high value for si for a single VCPU.

  - Background: When packets are sent to a VM on a host, its dom0 needs to process interrupt requests associated with the interrupt queues that correspond to the device the packets arrived on.

  - Probable cause: dom0 is set up to process all interrupt requests for a specific device on a specific dom0 VCPU.

  - Recommendation 1: Check in /proc/interrupts whether your device exposes multiple interrupt queues. If the device supports this feature, make sure that it is enabled.

  - Recommendation 2: If the device supports multiple interrupt queues, distribute the processing of them either automatically (by using the irqbalance daemon), or manually (by setting /proc/irq/<irq-no>/smp_affinity) to all (or a subset of) dom0 VCPUs.

  - Recommendation 3: Otherwise, make sure that an otherwise relatively-idle dom0 VCPU is set to process the interrupt queue (by manually setting the appropriate /proc/irq/<irq-no>/smp_affinity).

- In dom0, a VCPU is fully occupied with a netback process.

  - Verifying the symptom: Run top in dom0. Check if there is a netback process that appears to be taking almost 100%. Then, run xentop in dom0, and check VCPU usage for dom0: if it reads about 120% +/- 20% when there is no other significant process in dom0, then there's a high chance that you have confirmed the symptom.

  - Background: When packets are sent from or to a VM on a host, the packets are processed by a netback process, which is dom0's side of the VM network driver (the VM's side is called netfront).

  - General Recommendation: Try enabling NIC offloading; see Tweaks (below) on how to do this.

  - Possible cause 1: VMs' VIFs are not correctly distributed over the available netback threads.

    - Recommendation: Read the related KB article.

  - Possible cause 2: Too much traffic is being sent over a single VIF.

    - Recommendation: Create another VIF for the corresponding VM, and set up the application(s) within the VM to send/receive traffic over both VIFs. Since each VIF should be associated with a different netback process (each of which is linked to a different dom0 VCPU), this should remove the associated dom0 bottleneck. If every dom0 netback thread is taking 100% of a dom0 VCPU, increase the number of dom0 VCPUs and netback threads first; see Tweaks (below) on how to do this.

- There is a VCPU bottleneck either in a dom0 or in a VM, and I have control over both the sending and the receiving side of the network connection.

  - Verifying the symptom: (See notes about xentop and top above.)

  - Background: (Roughly) Each packet generates an interrupt request, and each interrupt request requires some VCPU capacity.

  - Recommendation: Enable Jumbo Frames (see Tweaks (below) for more information) for the whole connection. This should decrease the number of interrupts, and therefore decrease the load on the associated VCPUs (for a specific amount of network traffic).

- There is obviously no VCPU bottleneck either in a dom0 or in a VM. Why is the framework not making use of the spare capacity?

  - Verifying the symptom: (See notes about xentop and top above.)

  - Background: Many factors affect network performance, and many more come into play when using virtual machines.

  - Possible cause 1: Part of the connection has reached its physical throughput limit.

    - Recommendation 1: Verify that all network components in the connection path physically support the desired network throughput.

    - Recommendation 2: If a physical limit has been reached for the connection, add another network path, set up appropriate PIFs and VIFs, and configure the application(s) to use both/all paths.

  - Possible cause 2: Some parts of the software associated with network processing might not be completely parallelisable, or the hardware cannot make use of its parallelisation capabilities if the software doesn't follow certain patterns of behaviour.

    - Recommendation 1: Set up the application used for sending or receiving network traffic to use multiple threads. Experiment with the number of threads.

    - Recommendation 2: Experiment with the TCP parameters, e.g. window size and message size; see Tweaks (below) for recommended values.

    - Recommendation 3: If IOMMU is enabled on your system, try disabling it. See Tweaks for a section on how to disable IOMMU.

    - Recommendation 4: Try switching the network backend. See the Tweaks section on how to do that.
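The si column that top shows per VCPU can also be estimated without an interactive session. The following is a minimal sketch, not part of the original guide: it assumes the standard Linux /proc/stat layout (softirq time in the eighth column of each cpuN line), and the function name softirq_share is made up for illustration. It compares two snapshots and prints, for each VCPU, the percentage of time spent on softirqs in between.

```shell
#!/bin/sh
# softirq_share SNAP1 SNAP2
# SNAP1 and SNAP2 are copies of /proc/stat taken a few seconds apart.
# Prints "cpuN <percent>" for every CPU present in both snapshots.
softirq_share() {
    awk '
        # per-CPU line: cpuN user nice system idle iowait irq softirq steal ...
        /^cpu[0-9]+ / {
            total = 0
            for (f = 2; f <= 9 && f <= NF; f++) total += $f
            if (FNR == NR) {
                # first file: remember the baseline counters
                base_total[$1] = total
                base_si[$1] = $8
            } else if (($1 in base_total) && total > base_total[$1]) {
                printf "%s %.1f\n", $1, 100 * ($8 - base_si[$1]) / (total - base_total[$1])
            }
        }
    ' "$1" "$2"
}
```

For example, in dom0 one could run cat /proc/stat > /tmp/s1; sleep 5; cat /proc/stat > /tmp/s2; softirq_share /tmp/s1 /tmp/s2 while traffic is flowing. A single VCPU with a much higher value than the others points at the symptom described above.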

Making throughput measurements

When making throughput measurements, it is a good idea to start with a simple environment. For example, if testing VM-level receive throughput, try sending traffic from a bare-metal (Linux) host to VM(s) on another (XCP/XenServer) host, and vice-versa when testing VM-level transmit throughput. Transmitting traffic is less demanding on the resources, and is therefore expected to produce substantially better results.

The following sub-sections provide more information about how to use some of the more common network performance tools.

Iperf 2.0.5

Installation

Linux

Make sure the following packages are installed on your system: gcc, g++, make, and subversion.

Iperf can be installed from Iperf's SVN repository:

svn co https://iperf.svn.sourceforge.net/svnroot/iperf iperf

cd iperf/trunk

./configure

make

make install

cd

iperf --version # should mention pthreads

You might also be able to install it via a package manager, e.g.:

apt-get install iperf

When using the yum package manager, you can install it via RPMForge.

Windows

You can use the following executable: iperf.exe

Note that we are not the authors of the above executable. Please use your anti-virus software to scan the file before using it.

Usage

We recommend the following usage of iperf:

- make sure that firewall is disabled/allows iperf traffic;

- tell iperf which units to report the results in, e.g. by using -f m; if not set explicitly, iperf will change units based on the result;

- an iperf test should last at least 20 seconds, e.g. -t 20;

- experiment with multiple communication threads, e.g. -P 4;

- repeat a test in a specific context at least 5 times, calculating an average, and making notes of any anomalies;

- experiment with TCP window size and buffer size settings --- using -w 256K -l 256K for both the receiver and the sender worked well for us;

- use a shell/batch script to start multiple iperf processes simultaneously (if required), and possibly to automate the whole testing process.

- when running iperf on a Windows VM:

  - run it in non-daemon mode on the receiver, since daemon mode tends to (it's still unclear as to when exactly) create a service. Having an iperf service is undesirable, since one cannot as easily control which VCPU it executes on, and with what priority. Also, you cannot have multiple receivers with a service running (in case you wanted to experiment with them);

  - run iperf with "realtime" priority, and on a non-first VCPU (if you are executing on a multi-VCPU VM) for reasons explained in the section above.

Here are the simplest commands to execute on the receiver, and then the sender:

# on receiver

iperf -s -f m -w 256K -l 256K

# on sender

iperf -c <receiver-IP> -f m -w 256K -l 256K -t 20
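The advice above to repeat each test at least 5 times and note anomalies can be supported by a small helper. This is only a sketch under our own assumptions: the function name summarise_runs is hypothetical, and it expects one throughput figure (e.g. in Mbits/sec) per line on standard input, however you collected them.

```shell
#!/bin/sh
# summarise_runs: read one throughput value per line from stdin and
# print the number of runs, the mean, and the extremes.
summarise_runs() {
    awk '
        NF {
            v = $1 + 0
            sum += v
            n++
            if (n == 1 || v < min) min = v
            if (n == 1 || v > max) max = v
        }
        END {
            if (n) printf "runs=%d mean=%.1f min=%.1f max=%.1f\n", n, sum / n, min, max
        }
    '
}
```

A large gap between min and max suggests that at least one run was disturbed and is worth repeating.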

To measure aggregate receive throughput of multiple VMs where the data is sent from a single source (e.g., a different physical machine), use:

#!/bin/bash

VMS=$1

THREADS=$2

TIME=$3

TMP=`mktemp`

for i in `seq $VMS`; do

VM_IP="192.168.1.$i" # use your IP scheme here

echo "Starting iperf for $VM_IP ..."

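# with -P greater than 1, iperf prints one line per thread followed by a [SUM] line; keep only the last line

iperf -c $VM_IP -w 256K -l 256K -t $TIME -f m -P $THREADS | tail -n 1 | grep -o "[0-9.]\+ Mbits/sec" | awk -vn=$i '{print n, $1}' >> $TMP &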

done

sleep $((TIME + 3))

sort $TMP

awk '{sum+=$2}END{print "Aggregate (Mbits/sec): ", sum}' $TMP

rm -f $TMP

Netperf 2.5.0

netperf's TCP_STREAM test also tends to give reliable results. However, since this version (the only version we recommend using) does not automatically parallelise over the available VCPUs, such parallelisation needs to be done manually in order to make better use of the available VCPU capacity.

Installation

Linux

Make sure the following packages are installed on your system: gcc, g++, make, and wget.

Then run the following commands:

wget ftp://ftp.netperf.org/netperf/netperf-2.5.0.tar.gz

tar xzf netperf-2.5.0.tar.gz

cd netperf-2.5.0

./configure

make

make check

make install

The receiver side can then be started manually with netserver, or you can configure it as a service:

# these commands may differ depending on your OS

echo "netperf 12865/tcp" >> /etc/services

echo "netperf stream tcp nowait root /usr/local/bin/netserver netserver" >> /etc/inetd.conf

/etc/init.d/openbsd-inetd restart

Windows

You can use the following executables:

Note that we are not the authors of the above executables. Please use your anti-virus software to scan the files before using them.

Usage

Here, we describe the usage of the Linux version of Netperf. The syntax for the Windows version is sometimes different; please see netclient.exe -h for more information.

With netperf installed on both sides, the following script can be used on either side to determine network throughput for transmitting traffic:

#!/bin/bash

THREADS=$1

TIME=$2

DST=$3

TMP=`mktemp`

for i in `seq $THREADS`; do

netperf -H $DST -t TCP_STREAM -P 0 -c -l $TIME >> $TMP &

done

sleep $((TIME + 3))

cat $TMP | awk '{sum+=$5}END{print sum}'

rm $TMP

NTttcp (Windows only)

The program can be installed by running this installer: NTttcp.msi

Note that we are not the authors of the above installer. Please use your anti-virus software to scan the file before using it.

After completing the installation, go to the installation directory, and make two copies of ntttcp.exe:

- ntttcpr.exe --- use for receiving traffic

- ntttcps.exe --- use for sending traffic

For usage guidelines, please refer to the guide in the installation directory.

Diagnostic tools

There are many diagnostic tools one can use:

- Performance tab in VM's Task Manager;

- Performance tab for the VM in XenCenter;

- Performance tab for the VM's host in XenCenter;

- top (with z and 1 pressed) in VM's host's dom0; and,

- xentop in VM's host's dom0.

It is sometimes also worth observing /proc/interrupts in dom0, as well as /proc/irq/<irqno>/smp_affinity.
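To see quickly which interrupt queues belong to a given interface, the relevant lines of /proc/interrupts can be filtered. The sketch below is an illustration only: the function name irqs_for_device is made up, and it assumes that the last column of each line is the queue name, either the interface name itself or the interface name followed by a hyphen (e.g. eth6-TxRx-0). Naming differs between drivers.

```shell
#!/bin/sh
# irqs_for_device DEVICE [FILE]
# Prints "<irq-no> <queue-name>" for every interrupt line whose name is
# DEVICE or starts with "DEVICE-". FILE defaults to /proc/interrupts.
irqs_for_device() {
    awk -v dev="$1" '
        $1 ~ /^[0-9]+:$/ && $NF ~ ("^" dev "(-|$)") {
            irq = $1
            sub(":", "", irq)      # "1272:" -> "1272"
            print irq, $NF
        }
    ' "${2:-/proc/interrupts}"
}
```

More than one line of output for an interface indicates that the device exposes multiple interrupt queues; the printed numbers are the ones to look up under /proc/irq/<irqno>/smp_affinity.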

Recommended configurations

When reading this section, please see the Tweaks below it for reference.

CPU bottleneck

All network throughput tests were, in the end, bottlenecked by VCPU capacity. This means that machines with better physical CPUs are expected to achieve higher network throughputs for both dom0 and VM tests.

Number of VM pairs and threads

If one is interested in achieving a high aggregate network throughput of VMs on a host, it is crucial to consider both the number of VM pairs and the number of network transmitting/receiving threads in each VM. Ideal values for these numbers vary from OS to OS due to different networking stack implementations, so some experimentation is recommended --- finding a good balance can have a drastic effect on network performance (mainly due to better VCPU utilisation). Our research shows that 8 pairs with 2 iperf threads per pair works well for Debian-based Linux, while 4 pairs with 8 iperf threads per pair works well for Windows 7.

Allocation of VIFs over netback threads

All results above assume equal distribution of used VIFs over available netback threads, which may not always be possible --- see a KB article for more information. For VM network throughput, it is important to get as close as possible to equal distribution in order to make efficient use of the available VCPUs.

Using irqbalance

The irqbalance daemon is enabled by default. It has been observed that this daemon can improve VM network performance by about 16%. Note that this is much less than the potential gain of getting the other points described in this section right. The reason why irqbalance can help is that it distributes the processing of dom0-level interrupts across all available dom0 VCPUs, not just the first one.

Optimising Windows VMs (and other HVM guests)

It appears that Xen currently feeds all interrupts for a guest to the guest's first VCPU, i.e. VCPU0. Initial observations show that more CPU cycles are spent processing the interrupt requests than actually processing the received data (assuming there is no disk I/O, which is slow). This means that, on a Windows VM with 2 VCPUs, all processing of the received data should be done on the second VCPU, i.e. VCPU1: Task Manager > Processes > Select Process > Set CPU affinity > 1 --- in this case, VCPU0 will be fully used, whereas VCPU1 will probably have some spare cycles. While this is acceptable, it is more efficient to use 2 guests (1 VCPU each), which makes full use of both VCPUs. Therefore, to avoid this bottleneck altogether, one should probably use "<number of host CPUs> - 4" VMs, each with 1 VCPU, and combine their capabilities with a NetScaler Appliance.

Offloading some network processing to NICs

Network offloading is not officially supported, since there are known issues with some drivers. That said, if your NIC supports offloading, try to use it, especially Generic Receive Offload (GRO). However, please verify carefully that it works for your NIC+driver before using it in a production environment.

If performing mainly dom0-to-dom0 network traffic, turning on the GRO setting for the NICs involved can be highly beneficial when combined with the irqbalance daemon (see above). This configuration can easily be combined with Open vSwitch (the default option), since its performance is equal to or better than that of a Linux Bridge. Turning on the Large Receive Offload (LRO) setting tends to, in general, decrease dom0 network throughput.

Our initial test results indicate that turning on either of the two offload settings (GRO or LRO) in dom0 can give mixed results based on the context. Feel free to experiment and let us know your findings.

Jumbo frames

Note that jumbo frames for the connection from A to B only work when every part of the connection supports (and has enabled) MTU 9000. See the Tweaks section below for information on how to enable this in some contexts.

We have observed network performance gains for VM-to-VM traffic (where VMs are on different hosts). Where the VMs were Linux PV guests, we were able to enable GRO offloading in hosts' dom0, which provided a further speedup.

Open vSwitch

In the various tests that we performed, we observed no statistically significant difference in network performance for dom0-to-dom0 traffic. We observed from 3% (Linux PV guests, no irqbalance) to about 10% (Windows HVM guests, with irqbalance) worse performance for VM-to-VM traffic.

TCP settings

Our experiments show that tweaking TCP settings inside the VM(s) can lead to substantial network performance improvements. The main reason for this is that most systems are still by default configured to work well on 100Mb/s or 1Gb/s, not 10Gb/s, NICs. The Tweaks section below contains a section about the recommended TCP settings for a VM.

Using SR-IOV

SR-IOV is currently a double-edged sword. This section explains what SR-IOV is, what its downsides are, and what its benefits are.

Single Root I/O Virtualisation (SR-IOV) is a PCI device virtualisation technology that allows a single PCI device to appear as multiple PCI devices on the physical PCI bus. The actual physical device is known as a Physical Function (PF) while the others are known as Virtual Functions (VF). The purpose of this is for the hypervisor to directly assign one or more of these VFs to a Virtual Machine (VM) using SR-IOV technology: the guest can then use the VF as any other directly assigned PCI device. Assigning one or more VFs to a VM allows the VM to directly exploit the hardware. When configured, each VM behaves as though it is using the NIC directly, reducing processing overhead and improving performance.

SR-IOV can be used only with architectures that support IOMMU and NICs that support SR-IOV; there could be further compatibility constraints by the architecture or the NIC. Please contact support or ask on forums about recommended/officially supported configurations.

If your VM has an SR-IOV VF, functions that require VM mobility, for example, Live Migration, Workload Balancing, Rolling Pool Upgrade, High Availability and Disaster Recovery, are not possible. This is because the VM is directly tied to the physical SR-IOV enabled NIC VF. In addition, VM network traffic sent via an SR-IOV VF bypasses the vSwitch, so it is not possible to create ACLs or view QoS.

Our experiments show that a single-VCPU VM using SR-IOV on a modern system can (together with the usual NIC offloading features enabled) saturate (or nearly saturate) a 10Gbps connection when receiving traffic. Furthermore, the impact on dom0 is negligible.

The Tweaks section below contains a section about how to enable SR-IOV.

Tweaks

Automatic IRQ Balancing in Dom0

irqbalance is enabled by default.

If IRQ balancing service is already installed, you can enable it by running:

service irqbalance start

Otherwise, you need to install it first with:

yum --disablerepo=citrix --enablerepo=base,updates install -y irqbalance

Manual IRQ Balancing in Dom0

While irqbalance does the job in most situations, manual IRQ balancing can prove better in some situations. If we have a dom0 with 4 VCPUs, the following script disables irqbalance, and evenly distributes specific interrupt queues (1272--1279) among the available VCPUs:

service irqbalance stop

for i in `seq 0 7`; do

queue=$((1272 + i))

aff=$((1 << i % 4))

printf "%x" $aff > /proc/irq/$queue/smp_affinity

done

To find out how many dom0 VCPUs a host has, use cat /proc/cpuinfo. To find out what interrupt queues correspond to which interface, use `cat /proc/interrupts`.
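The mask written to smp_affinity is a hexadecimal bitmap with one bit per VCPU, so queue i is placed on VCPU i modulo the number of VCPUs. The sketch below generalises the script above to any first IRQ number, queue count and VCPU count. The function names are made up for illustration, and it only prints the plan rather than writing to /proc, so it can be inspected first.

```shell
#!/bin/sh
# irq_affinity_mask INDEX NCPUS
# Hex bitmask selecting VCPU (INDEX mod NCPUS).
irq_affinity_mask() {
    printf '%x\n' $(( 1 << ($1 % $2) ))
}

# plan_irq_affinity FIRST_IRQ NQUEUES NCPUS
# Prints "<irq-no> <mask>" for NQUEUES consecutive IRQs, round-robin
# over NCPUS VCPUs. Runs in a subshell so the loop counter does not leak.
plan_irq_affinity() (
    i=0
    while [ "$i" -lt "$2" ]; do
        echo "$(( $1 + i )) $(irq_affinity_mask "$i" "$3")"
        i=$(( i + 1 ))
    done
)
```

With the numbers used above, plan_irq_affinity 1272 8 4 prints eight lines, starting with "1272 1" and ending with "1279 8". Each mask can then be written to /proc/irq/<irq-no>/smp_affinity once irqbalance has been stopped.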

Changing the Number of Dom0 VCPUs

To check the current number of dom0 VCPUs, run cat /proc/cpuinfo.

The desired number of dom0 VCPUs can be set in /etc/sysconfig/unplug-vcpus.

For this to take effect, you can either restart the host, or (only in the case where the number of VCPUs in dom0 is decreasing) run:

/etc/init.d/unplug-vcpus start

Changing the Number of Netback Threads in Dom0

By default, the number of netback threads in dom0 equals min(4,<number_of_vcpus_in_dom0>). Therefore, increasing the number of dom0 VCPUs above 4 will not, by default, increase the number of netback threads.

To increase the threshold number of netback threads to 12, write xen-netback.netback_max_groups=12 into /boot/extlinux.conf, under the section labelled xe-serial, just after the assignment console=hvc0.
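The two statements above can be sketched as follows. This is an illustration under assumptions, not a supported procedure: the function names are hypothetical, and the second function assumes that sections in extlinux.conf start with a line of the form "label <name>" and that console=hvc0 appears on the append line of that section. It works as a filter, so the result can be reviewed before replacing the real file.

```shell
#!/bin/sh
# default_netback_threads NVCPUS
# Default number of netback threads: min(4, number of dom0 VCPUs).
default_netback_threads() {
    echo $(( $1 < 4 ? $1 : 4 ))
}

# add_netback_groups N < extlinux.conf > extlinux.conf.new
# Inserts xen-netback.netback_max_groups=N right after console=hvc0,
# but only inside the section labelled xe-serial, and only once.
add_netback_groups() {
    awk -v n="$1" '
        $1 == "label" { in_section = ($2 == "xe-serial") }
        in_section && /console=hvc0/ && !/netback_max_groups/ {
            sub(/console=hvc0/, "console=hvc0 xen-netback.netback_max_groups=" n)
        }
        { print }
    '
}
```

For example, add_netback_groups 12 < /boot/extlinux.conf > /tmp/extlinux.conf.new followed by a diff against the original shows exactly what would change. Keep a backup of the original file before replacing it.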

Enabling NIC Offloading

Please see the "Offloading some network processing to NICs" section above.

You can use ethtool to enable/disable NIC offloading.

ETH=eth6 # the connection for which you want to enable offloading

ethtool -k $ETH # check what is currently enabled/disabled

ethtool -K $ETH gro on # enable GRO

Note that changing offload settings directly via ethtool will not persist the configuration through host reboots; to do that, use other-config of the xe command.

xe pif-param-set uuid=<pif_uuid> other-config:ethtool-gro=on
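When verifying offload settings on several interfaces, it can help to extract the state of a single feature from the ethtool -k listing. The helper below is a sketch with a made-up name (offload_state). It assumes the listing consists of "name: state" lines, and it treats spaces and hyphens in feature names as equivalent, since the spelling varies between ethtool versions.

```shell
#!/bin/sh
# offload_state FEATURE
# Reads `ethtool -k <dev>` output on stdin and prints the state (on/off)
# of FEATURE, e.g. generic-receive-offload. Exits non-zero if the feature
# is not listed.
offload_state() {
    awk -F': *' -v feat="$1" '
        {
            name = $1
            sub(/^[ \t]+/, "", name)   # drop indentation
            gsub(/ /, "-", name)       # some versions print spaces
        }
        name == feat {
            split($2, word, " ")       # ignore suffixes such as [fixed]
            print word[1]
            found = 1
        }
        END { exit !found }
    '
}
```

Usage: ethtool -k eth6 | offload_state generic-receive-offload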

Enabling Jumbo Frames

Suppose eth6 and xenbr6 are the device and the bridge corresponding to the 10 Gbit/s connection used.

Shut down user domains:

VMs=$(xe vm-list is-control-domain=false params=uuid --minimal | sed 's/,/ /g')

for uuid in $VMs; do xe vm-shutdown uuid=$uuid; done

Set network MTU to 9000, and re-plug relevant PIFs:

net_uuid=`xe network-list bridge=xenbr6 params=uuid --minimal`

xe network-param-set uuid=$net_uuid MTU=9000

PIFs=$(xe pif-list network-uuid=$net_uuid --minimal | sed 's/,/ /g')

for uuid in $PIFs; do xe pif-unplug uuid=$uuid; xe pif-plug uuid=$uuid; done

Start user domains (you might want to make sure that VMs are started one after another to avoid potential VIF static allocation problems):

VMs=$(xe vm-list is-control-domain=false params=uuid --minimal | sed 's/,/ /g')

for uuid in $VMs; do xe vm-start uuid=$uuid; done

Set up the connections you will use inside the user domains to use MTU 9000. For Linux VMs, this is done with:

ETH=eth1 # the user domain connection you are concerned with

ifconfig $ETH mtu 9000 up

Verifying:

xe vif-list network-uuid=$net_uuid params=MTU --minimal
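Since jumbo frames only work when every hop supports MTU 9000, it is also worth testing the path end to end. One common approach, sketched below under our own assumptions, is to send ICMP echo requests of the largest size that fits into one frame with fragmentation prohibited. The function names are made up, and the sketch assumes the Linux iputils version of ping, whose -M do option forbids fragmentation.

```shell
#!/bin/sh
# icmp_payload_for_mtu MTU
# Largest ICMP payload that fits into one IPv4 packet of size MTU:
# MTU minus 20 bytes of IPv4 header and 8 bytes of ICMP header.
icmp_payload_for_mtu() {
    echo $(( $1 - 28 ))
}

# check_path_mtu DESTINATION [MTU]
# Sends three non-fragmentable echo requests sized for MTU (default 9000).
# If any hop has a smaller MTU, the pings fail instead of being fragmented.
check_path_mtu() {
    ping -M do -c 3 -s "$(icmp_payload_for_mtu "${2:-9000}")" "$1"
}
```

For MTU 9000 the payload is 8972 bytes, so check_path_mtu <receiver-IP> is equivalent to ping -M do -c 3 -s 8972 <receiver-IP>.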

Linux TCP parameter settings

Default in Dom0

ETH=eth6 # the connection you are concerned with

sysctl -w net.core.rmem_max=131071

sysctl -w net.core.wmem_max=131071

sysctl -w net.ipv4.tcp_rmem="4096 87380 3080192"

sysctl -w net.ipv4.tcp_wmem="4096 16384 3080192"

sysctl -w net.core.netdev_max_backlog=1000

sysctl -w net.ipv4.tcp_congestion_control=reno

ifconfig $ETH txqueuelen 1000

ethtool -K $ETH gro off

sysctl -w net.ipv4.tcp_timestamps=1

sysctl -w net.ipv4.tcp_sack=1

sysctl -w net.ipv4.tcp_fin_timeout=60

Default for a Demo Etch Linux VM

ETH=eth1 # the connection you are concerned with

sysctl -w net.core.rmem_max=109568

sysctl -w net.core.wmem_max=109568

sysctl -w net.ipv4.tcp_rmem="4096 87380 262144"

sysctl -w net.ipv4.tcp_wmem="4096 16384 262144"

sysctl -w net.core.netdev_max_backlog=1000

sysctl -w net.ipv4.tcp_congestion_control=bic

ifconfig $ETH txqueuelen 1000

ethtool -K $ETH gso off

sysctl -w net.ipv4.tcp_timestamps=1

sysctl -w net.ipv4.tcp_sack=1

sysctl -w net.ipv4.tcp_fin_timeout=60

Recommended TCP settings for Dom0

Changing these settings is only relevant if you want to optimise network connections for which one of the end-points is dom0 (not a user domain). Using settings recommended for a user domain (VM) will work well for dom0 as well.

Recommended TCP settings for a VM

Bandwidth Delay Product (BDP) = Round Trip Time (RTT) * Theoretical Bandwidth Limit

For example, if RTT = 100ms = .1s, and theoretical bandwidth is 10Gbit/s, then:

BDP = (.1s) * (10 * 10^9 bit/s) = 10^9 bit = 1 Gbit ~= 2^30 bit = 134217728 B
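The same calculation can be scripted for other RTT and bandwidth values. This is a small sketch with made-up function names. It computes the exact BDP in bytes and then rounds up to the next power of two, which reproduces the buffer size used in the example above.

```shell
#!/bin/sh
# bdp_bytes RTT_MS BANDWIDTH_MBIT
# Bandwidth Delay Product in bytes:
# (RTT in ms) * (bandwidth in Mbit/s) * 1000 bits, divided by 8.
bdp_bytes() {
    echo $(( $1 * $2 * 1000 / 8 ))
}

# next_pow2 N
# Smallest power of two that is not less than N.
# Runs in a subshell so the helper variable does not leak.
next_pow2() (
    n=1
    while [ "$n" -lt "$1" ]; do
        n=$(( n * 2 ))
    done
    echo "$n"
)
```

For RTT = 100 ms and 10 Gbit/s, bdp_bytes 100 10000 gives 125000000 bytes, and next_pow2 125000000 gives 134217728.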

ETH=eth6

# ESSENTIAL (large benefit)

sysctl -w net.core.rmem_max=134217728 # BDP

sysctl -w net.core.wmem_max=134217728 # BDP

sysctl -w net.ipv4.tcp_rmem="4096 87380 134217728" # _ _ BDP

sysctl -w net.ipv4.tcp_wmem="4096 65536 134217728" # _ _ BDP

sysctl -w net.core.netdev_max_backlog=300000

modprobe tcp_cubic

sysctl -w net.ipv4.tcp_congestion_control=cubic

ifconfig $ETH txqueuelen 300000

# OPTIONAL (small benefit)

ethtool -K $ETH gso on

sysctl -w net.ipv4.tcp_sack=0 # for reliable networks only

sysctl -w net.ipv4.tcp_fin_timeout=15 # claim resources sooner

sysctl -w net.ipv4.tcp_timestamps=0 # does not work with GRO on in dom0

Checking existing settings:

ETH=eth6

sysctl net.core.rmem_max

sysctl net.core.wmem_max

sysctl net.ipv4.tcp_rmem

sysctl net.ipv4.tcp_wmem

sysctl net.core.netdev_max_backlog

sysctl net.ipv4.tcp_congestion_control

ifconfig $ETH | grep -o "txqueuelen:[0-9]\+"

ethtool -k $ETH 2> /dev/null | grep "generic.segmentation.offload"

sysctl net.ipv4.tcp_timestamps

sysctl net.ipv4.tcp_sack

sysctl net.ipv4.tcp_fin_timeout

Pinning a VM to specific CPUs

While this does not necessarily improve performance (it can easily make performance worse, in fact), it is useful when debugging CPU usage of a VM. To assign a VM to CPUs 3 and 4, run the following in dom0:

xe vm-param-set uuid=<vm-uuid> VCPUs-params:mask=3,4

Switching between Linux Bridge and Open VSwitch

To see what network backend you are currently using, run in dom0:

cat /etc/xensource/network.conf

To switch to using the Linux Bridge network backend, run in dom0:

xe-switch-network-backend bridge

To switch to using Open vSwitch network backend, run in dom0:

xe-switch-network-backend openvswitch

Enabling/disabling IOMMU

This is, in fact, not a tweak, but a requirement when using SR-IOV (see below).

Some versions of Xen have IOMMU enabled by default. If disabled, you can enable it by editing /boot/extlinux.conf, and adding iommu=1 to Xen parameters (i.e. just before the first --- of your active configuration). If enabled by default, you can disable it by using iommu=0, instead.

Enabling SR-IOV

Make sure that IOMMU is enabled in the version of Xen that you are running --- see section above.

In dom0, use lspci to display a list of Virtual Functions (VFs). For example,

07:10.0 Ethernet controller: Intel Corporation 82599 Ethernet Controller Virtual Function (rev 01)

In the example above, 07:10.0 is the bus:device.function address of the VF.

Assign a free (non-assigned) VF to the target VM by running:

xe vm-param-set other-config:pci=0/0000:<bus:device.function> uuid=<vm-uuid>

(Re-)Start the VM, and install the appropriate VF driver (inside your VM) for your specific NIC.

You can assign multiple VFs to a single VM; however, the same VF cannot be shared across multiple VMs.
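To avoid copying addresses by hand, the values for other-config:pci can be derived from the lspci listing. This is a sketch only: the function name vf_pci_values is made up, it assumes that VF lines contain the text "Virtual Function" as in the example above, and it assumes PCI domain 0000 as in the command above. It does not check whether a VF is already assigned to another VM.

```shell
#!/bin/sh
# vf_pci_values
# Reads `lspci` output on stdin and prints, for every Virtual Function,
# the value to use in other-config:pci (assuming PCI domain 0000).
vf_pci_values() {
    awk '/Virtual Function/ { print "0/0000:" $1 }'
}
```

Usage: lspci | vf_pci_values lists the candidate values; one of them can then be passed to xe vm-param-set other-config:pci=<value> uuid=<vm-uuid>.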

Acknowledgements

While this guide was mostly written by Rok Strniša, it could not have been nearly as good without the help and advice from many of his colleagues, including (in alphabetic order) Alex Zeffertt, Dave Scott, George Dunlap, Ian Campbell, James Bulpin, Jonathan Davies, Lawrence Simpson, Marcus Granado, Mike Bursell, Paul Durrant, Rob Hoes, Sally Neale, and Simon Rowe.