Xen-netback NAPI + kThread V1 performance testing

Contents

- 1 Xen-netback NAPI + kThread V1 performance testing

- 1.1 Test Description

- 1.2 Interpreting the plots

- 1.3 dom0 to Debian VM

- 1.4 dom0 to Debian VM (Split event channels)

- 1.5 Analysis of VM Rx (dom0 to Debian)

- 1.6 Debian VM to dom0 (IRQs manually distributed)

- 1.7 Debian VM (Split event channels) to dom0 (IRQs manually distributed)

- 1.8 Analysis of VM Tx (Debian to dom0)


Test Description

- The "Debian" VM template contains Debian Wheezy (7.0) 64-bit with an unmodified kernel and iperf installed. It was cloned eight times as Debian-$N.

- The "Split" VM template contains Debian Wheezy (7.0) 64-bit with a modified xen-netfront that supports split event channels. It was cloned eight times as Split-$N.

- Tests were performed using iperf with traffic flowing from dom0 to N domU instances, and from N domU instances to dom0 (see the individual sections below for results).

- iperf sessions ran for 120 seconds each and were repeated three times, for each combination of 1 to N domU instances and 1 to 4 iperf threads. Results were stored in a serialised Python list format for later analysis.

- Analysis scripts automatically produced the plots below from the stored results.

- The domU instances were shut down between each test repeat (but not when the number of iperf threads was changed) to ensure that the results are comparable and uncorrelated.

- No attempt was made to tune the dom0 configuration for performance; the default XenServer settings were used.

- dom0 was running a 32-bit 3.6.11 kernel

- The host has 12 cores on two sockets, across two nodes, with Hyperthreading enabled. The CPUs are Intel Xeon X5650 (2.67 GHz).

- dom0 has 2147483648 bytes (2048 MiB) of memory

- Each VM has 536870912 bytes (512 MiB) of memory

- Each VM has two VCPUs; dom0 has six VCPUs
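
As a rough illustration of the size of the test matrix described above, the following sketch enumerates every run for one traffic direction and builds an iperf client command line for each thread count. It is not the script used for these tests: the function names, the loop order and the server address are assumptions made for the example, and only the standard iperf (version 2) options `-c`, `-t` and `-P` are used.

```python
from itertools import product

MAX_VMS = 8        # Debian-1 .. Debian-8, as in the test description
MAX_THREADS = 4    # 1 to 4 iperf threads
REPEATS = 3        # each combination was repeated three times
DURATION_S = 120   # each iperf session ran for 120 seconds


def test_matrix():
    """Yield (repeat, n_vms, n_threads) for every run in one traffic direction.

    The iteration order is an assumption; the source only states which
    combinations were tested, not the order in which they ran.
    """
    yield from product(
        range(1, REPEATS + 1),
        range(1, MAX_VMS + 1),
        range(1, MAX_THREADS + 1),
    )


def iperf_client_args(server, n_threads):
    """Build an iperf client command line; `server` is a hypothetical address."""
    return ["iperf", "-c", server, "-t", str(DURATION_S), "-P", str(n_threads)]


runs = list(test_matrix())
total_seconds = len(runs) * DURATION_S
print(len(runs), "runs per direction,", total_seconds, "seconds of iperf time")
print(" ".join(iperf_client_args("192.0.2.10", 4)))
```

Counting only the time spent inside iperf sessions (and ignoring VM start-up and shutdown), this gives a lower bound on how long one direction of one kernel variant takes to measure.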

Interpreting the plots

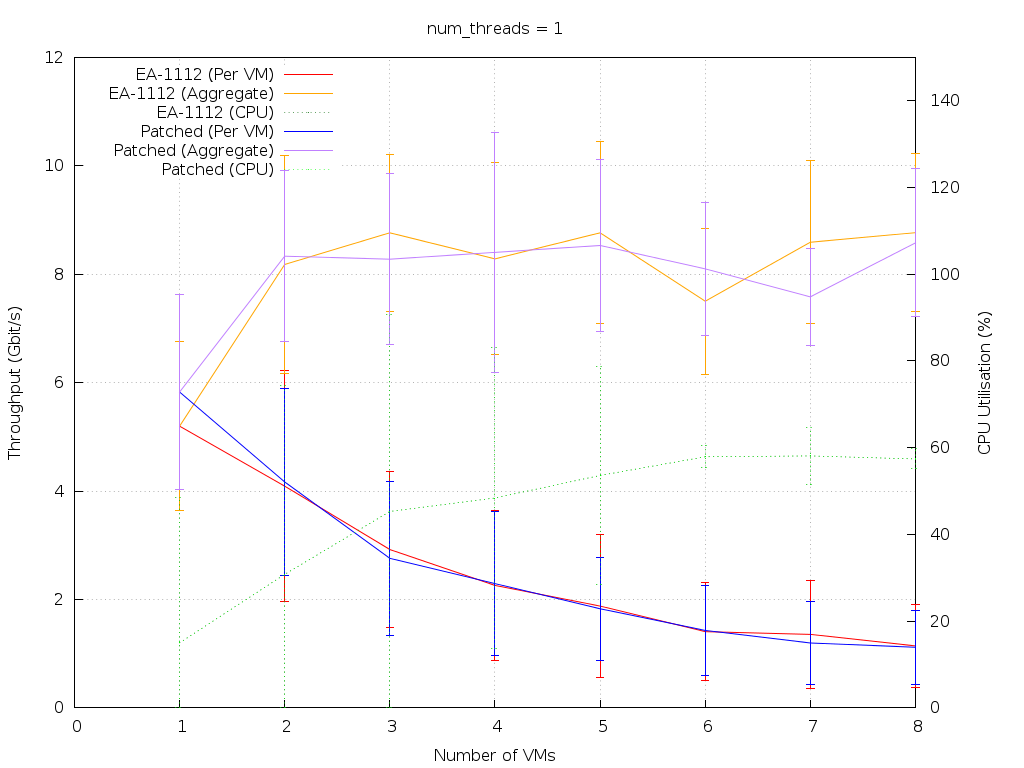

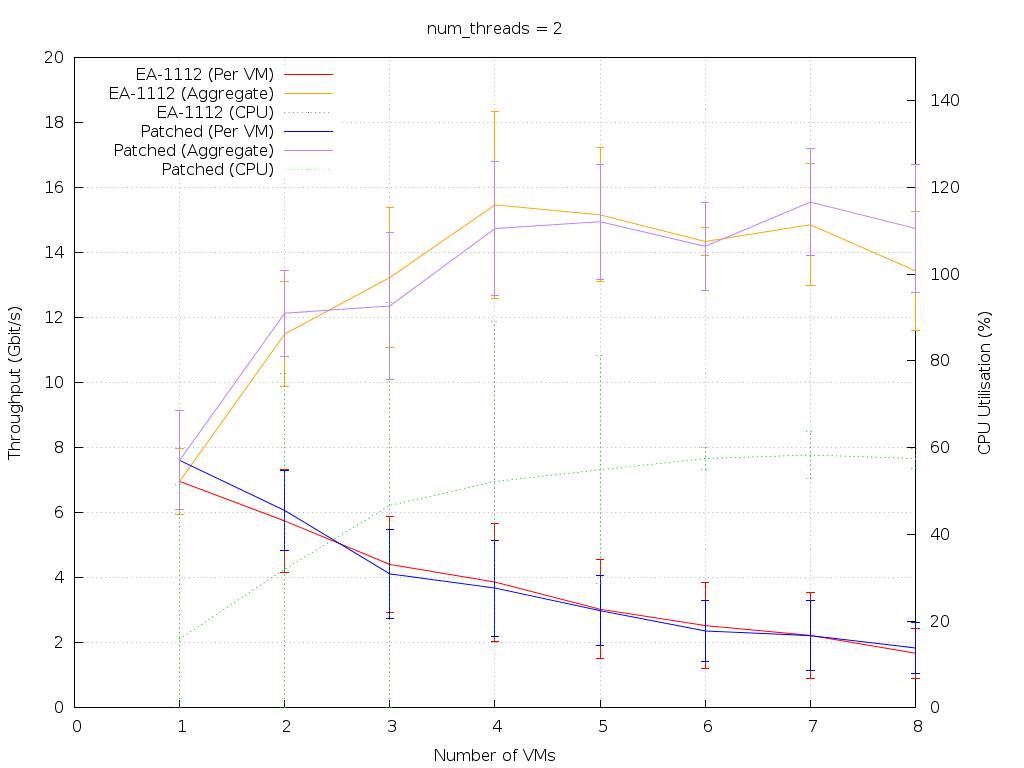

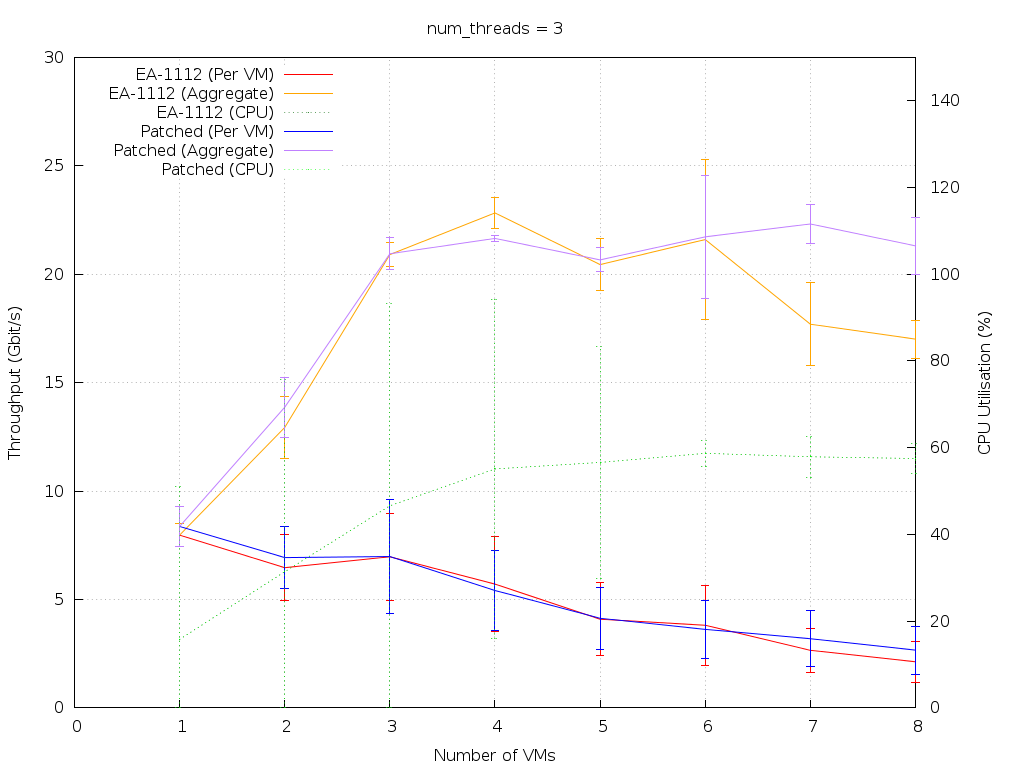

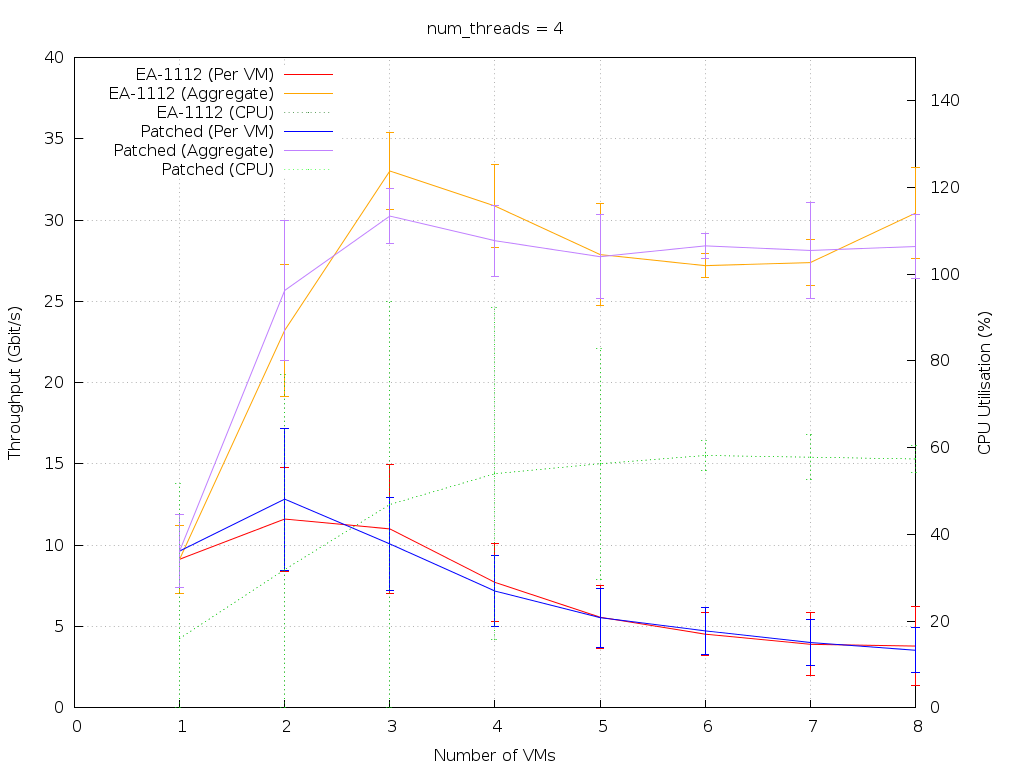

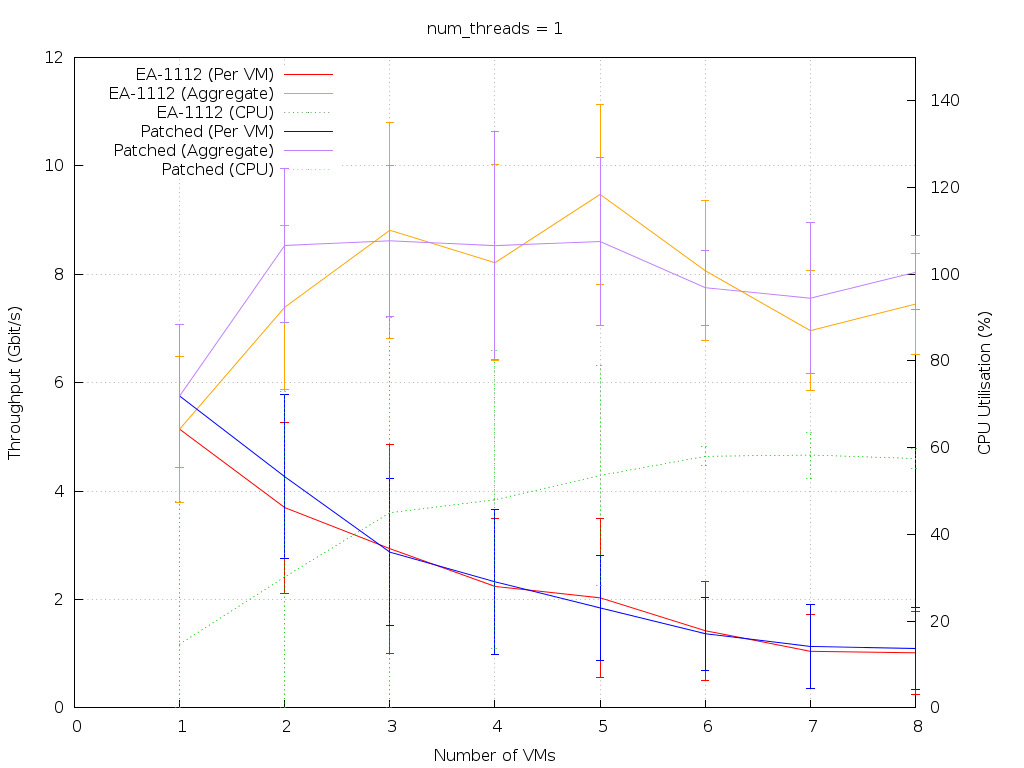

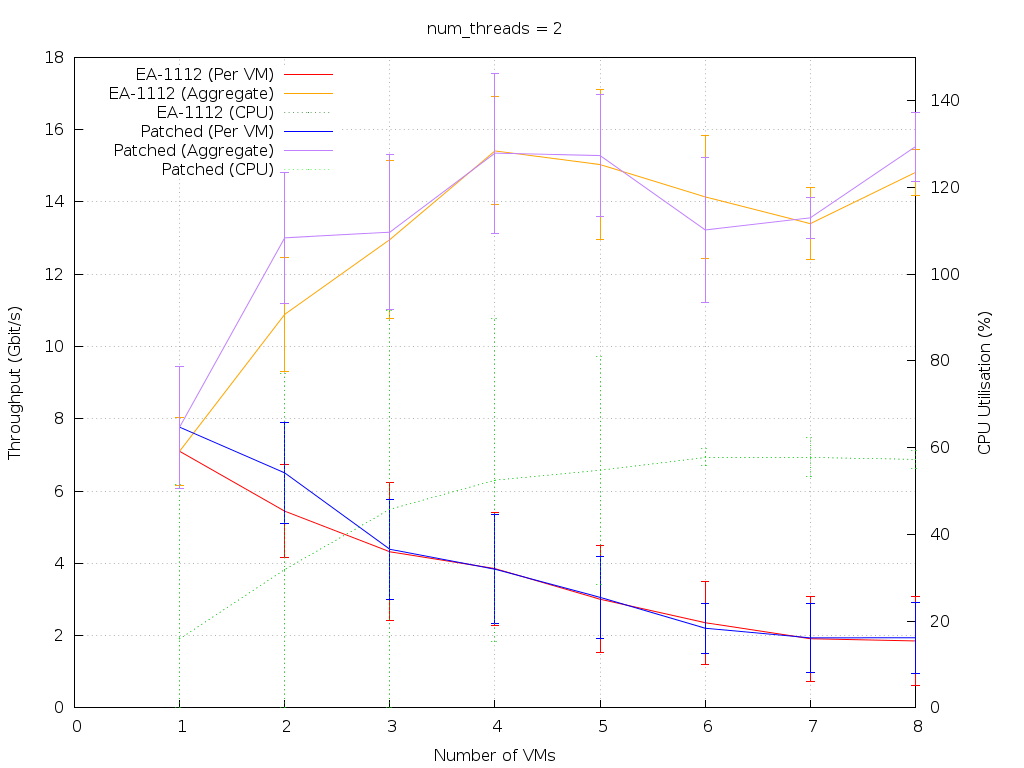

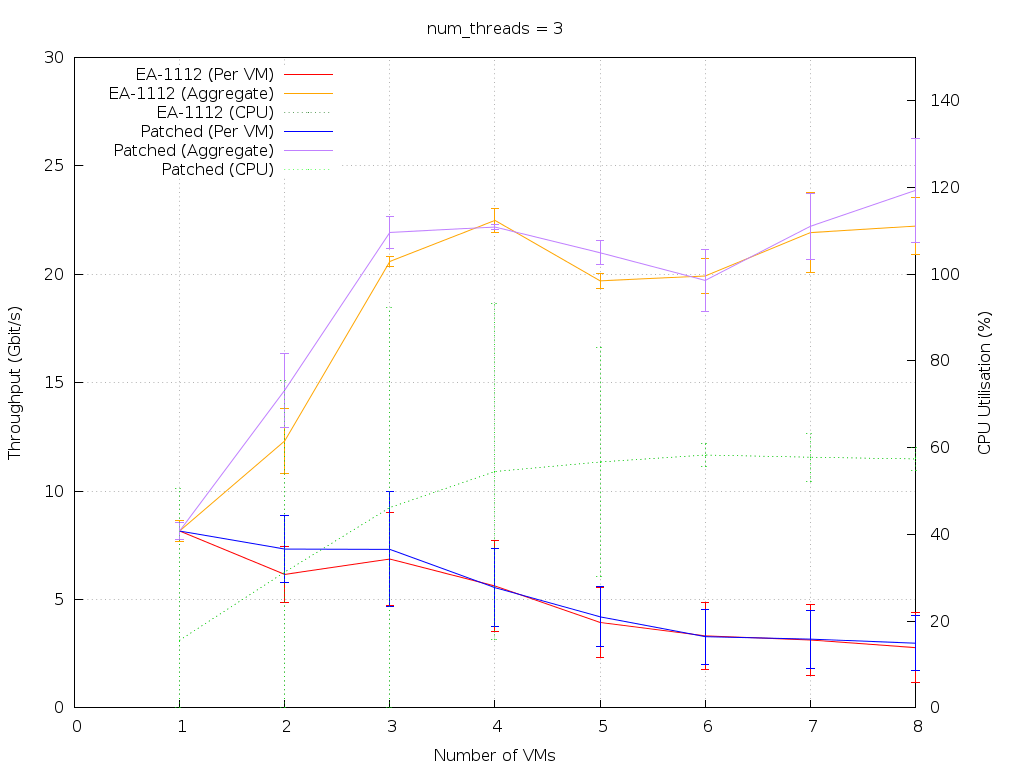

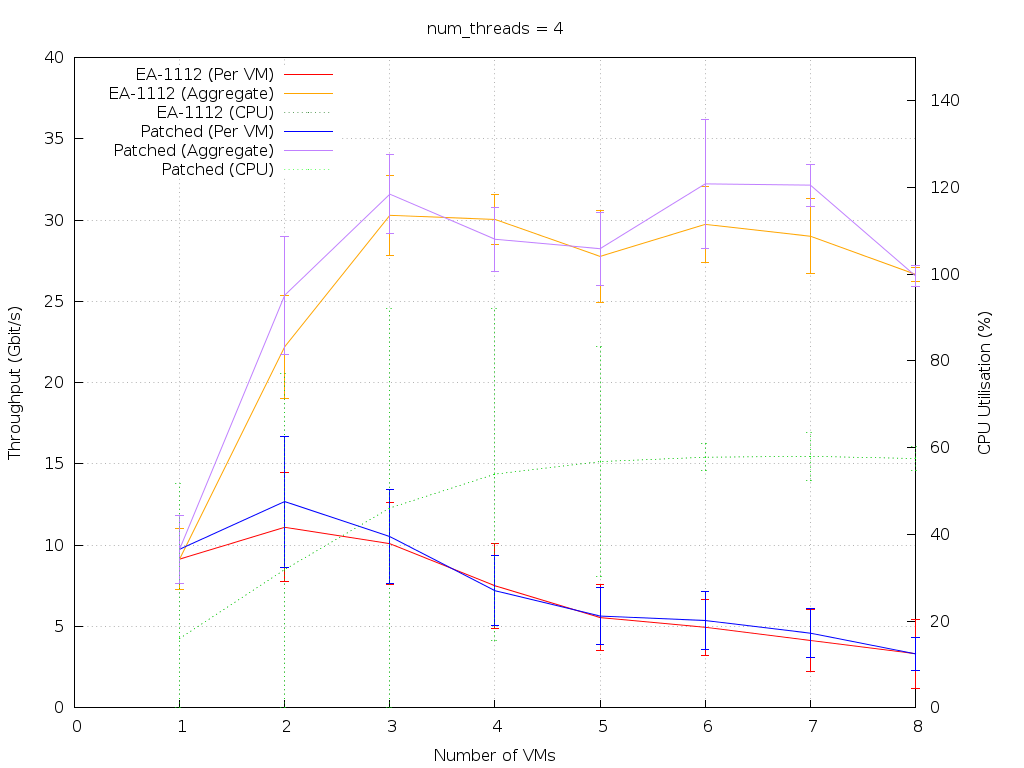

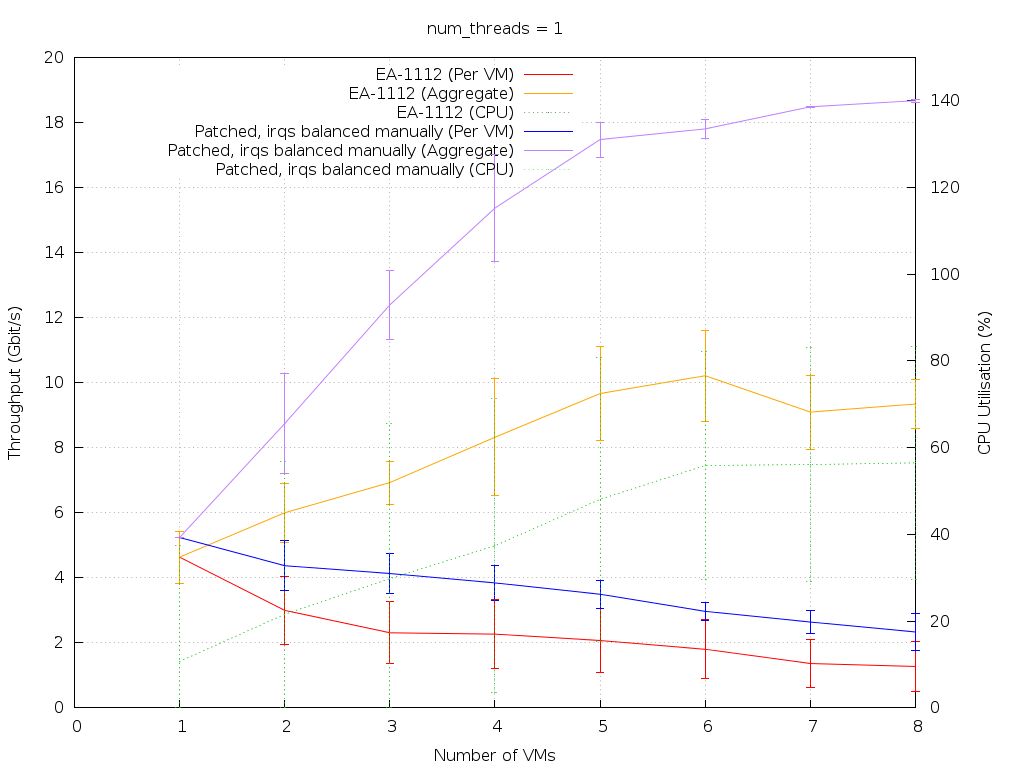

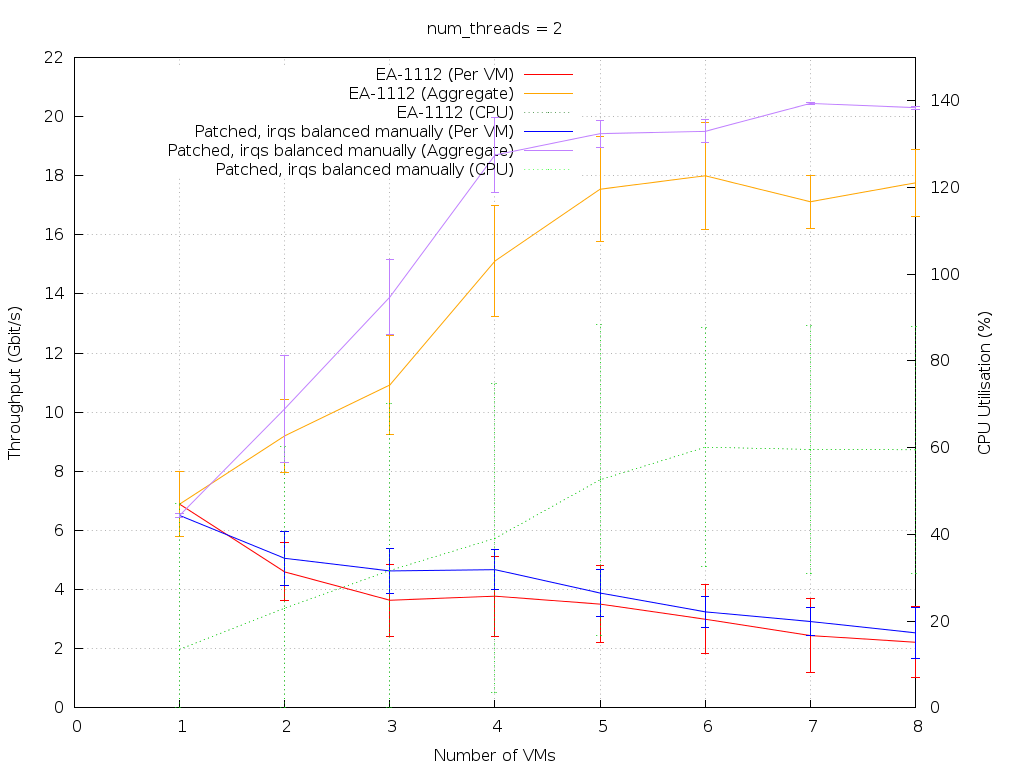

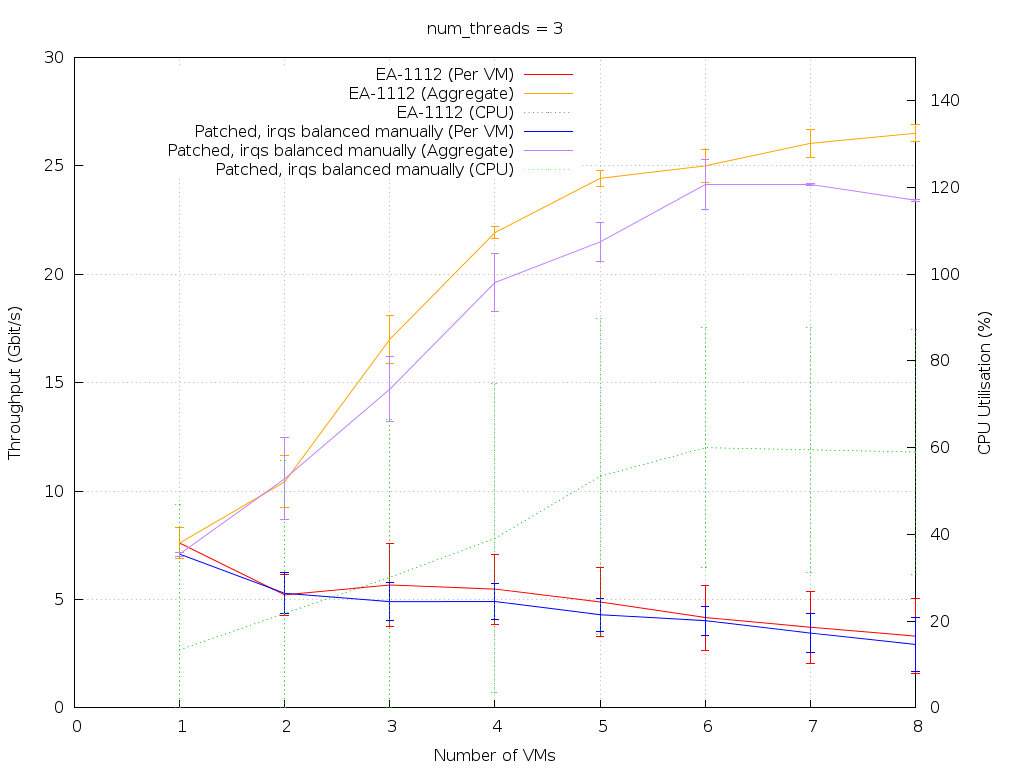

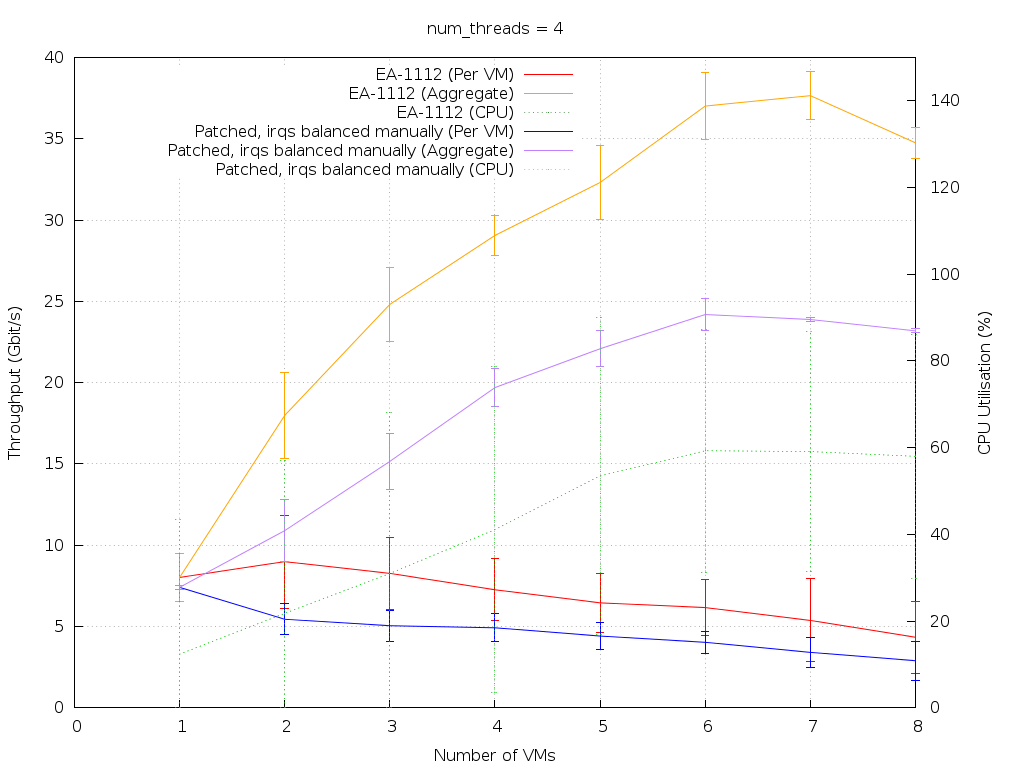

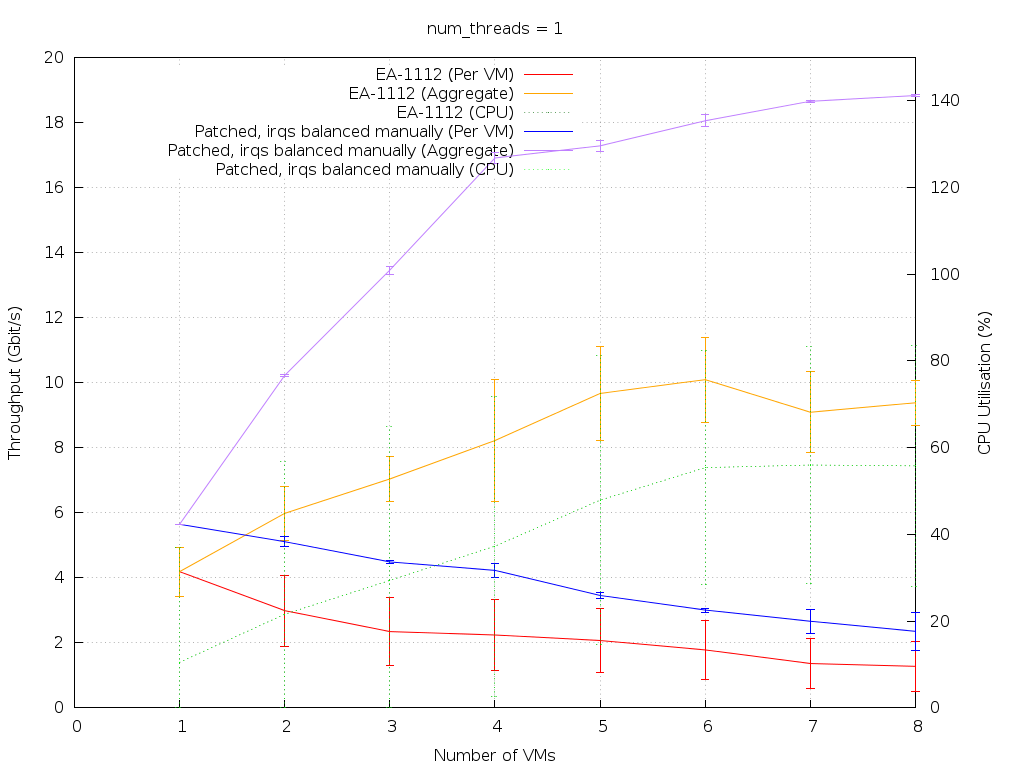

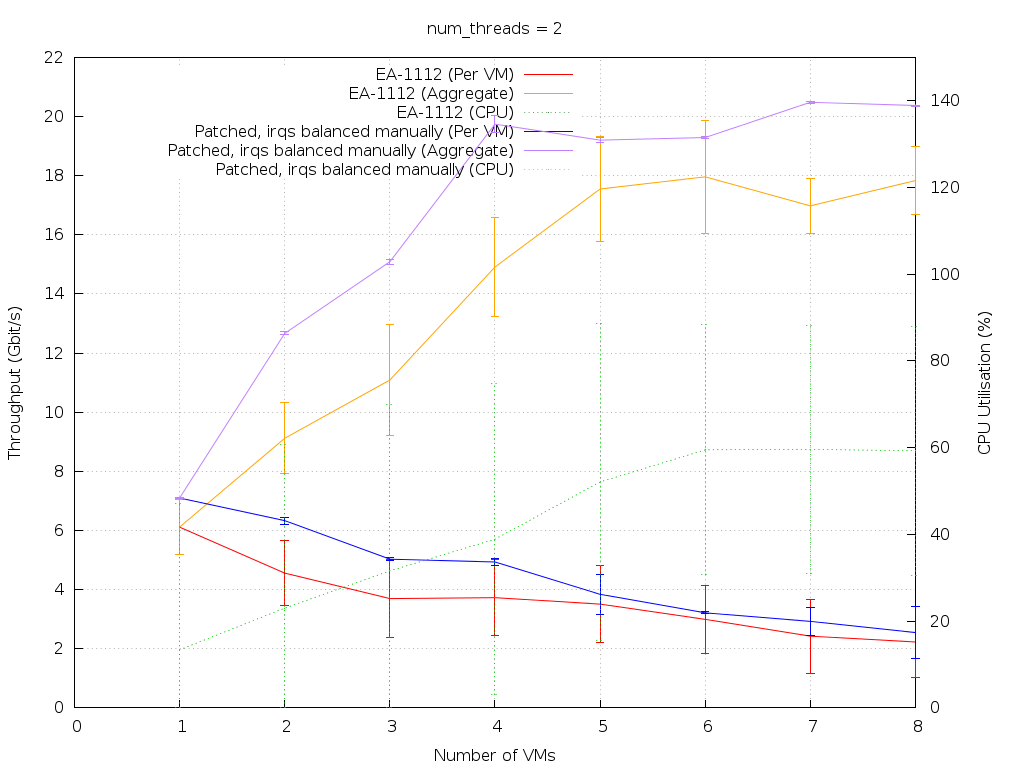

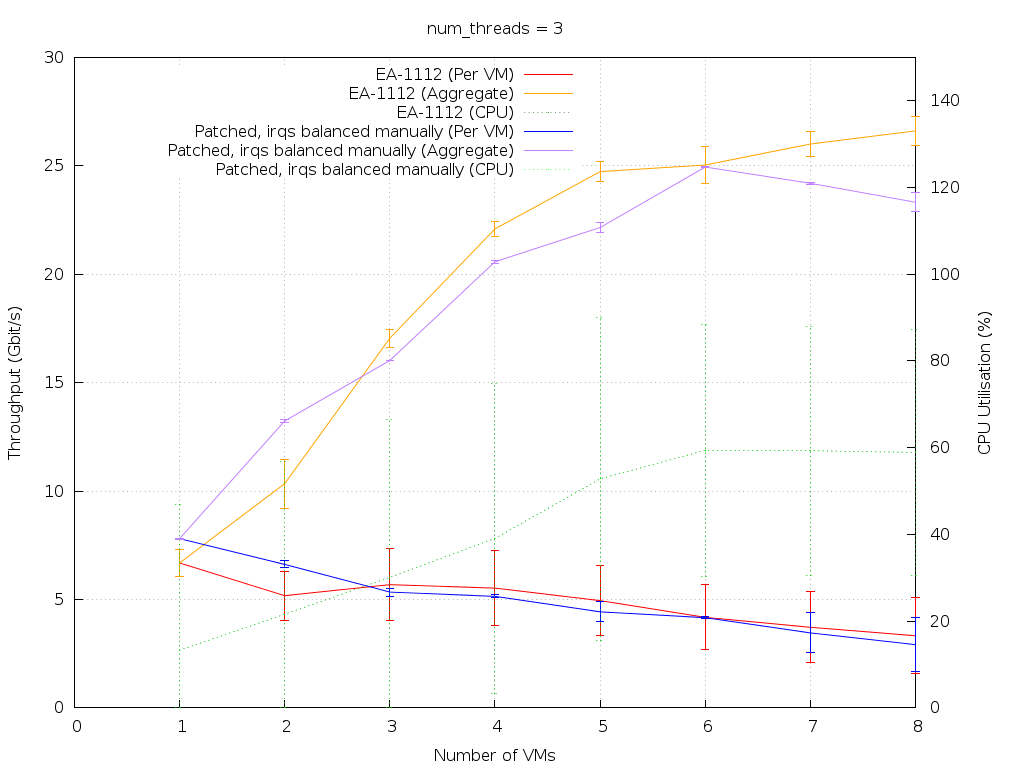

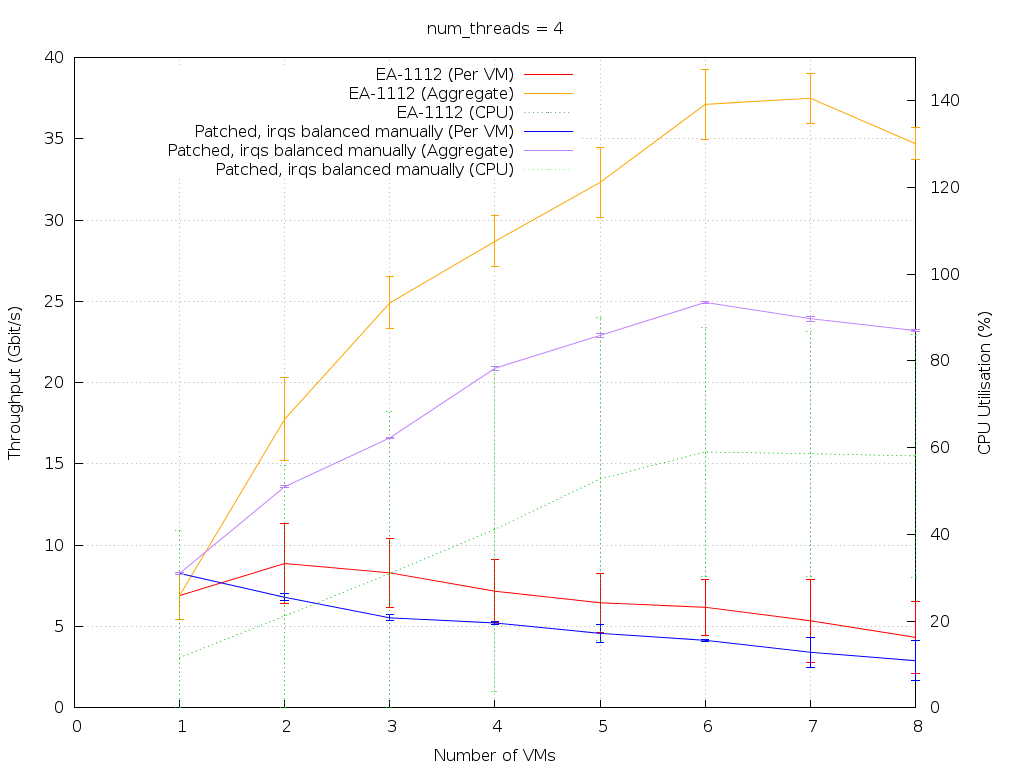

On the plots below, red and orange lines refer to the plain kernel tests, while blue and purple lines refer to the patched version. The dotted green lines show CPU usage for both.

The x-axis is the number of VMs, from 0 to 8 (data starts at N=1). The left-hand y-axis is throughput in Gbit/s, corresponding to the red, orange, blue and purple lines. The right-hand y-axis is CPU usage (as a percentage of one dom0 VCPU), corresponding to the green lines. Error bars show ± 1 standard deviation.

For each test, four plots are shown, corresponding to tests run with between 1 and 4 iperf threads (1 iperf thread = 1 TCP stream).
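
To make the error bars concrete, here is a minimal sketch of how one plotted point and its ± 1 standard deviation could be derived from three repeats stored as a serialised Python list. The stored format, the sample values and the function name `summarise` are all assumptions for illustration, and the sketch uses the sample standard deviation; the original analysis scripts may differ on each of these points.

```python
import ast
from statistics import mean, stdev

# Hypothetical stored result: aggregate throughput in Gbit/s, one value per repeat.
serialised = "[9.1, 8.7, 9.4]"


def summarise(serialised_list):
    """Return (mean, sample standard deviation) of a serialised list of numbers."""
    # literal_eval parses Python literals only, so it does not execute arbitrary code.
    values = ast.literal_eval(serialised_list)
    return mean(values), stdev(values)


avg, sd = summarise(serialised)
print(f"{avg:.2f} +/- {sd:.2f} Gbit/s")
```

With only three repeats per point, the standard deviation is itself a noisy estimate, so small differences in error-bar size between curves should be read with caution.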

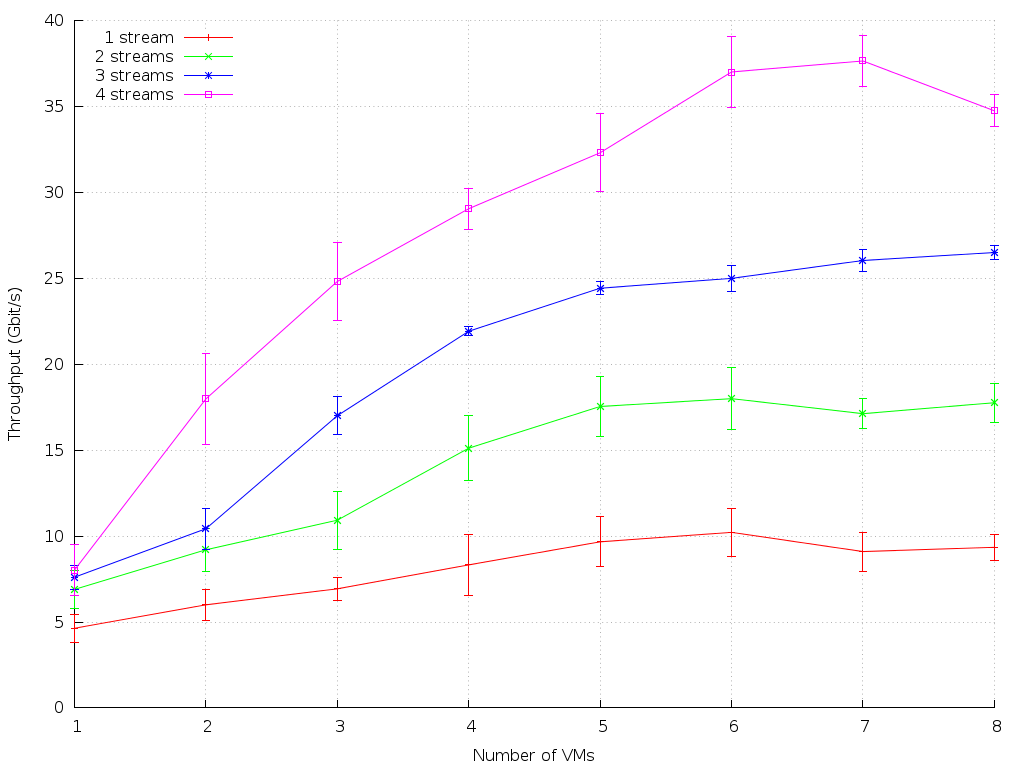

dom0 to Debian VM

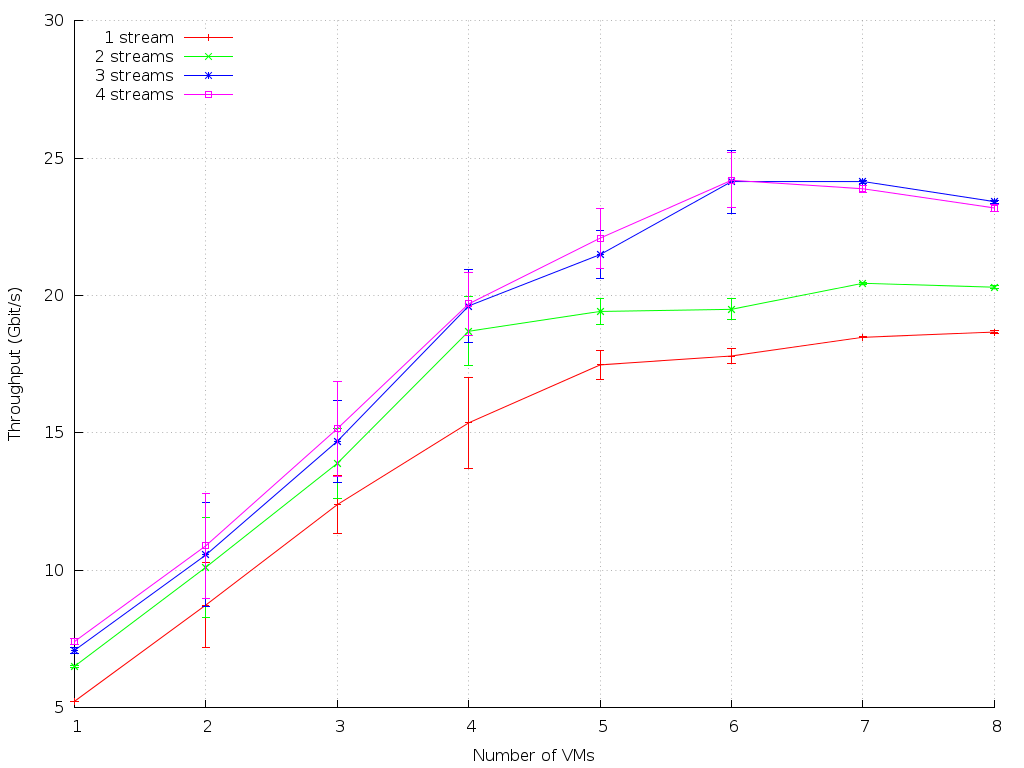

dom0 to Debian VM (Split event channels)

Analysis of VM Rx (dom0 to Debian)

The results for network traffic from dom0 into a VM show that there is no significant change introduced by the patches in terms of throughput or CPU usage. The error bars are typically smaller for the results with the patches applied, which is expected due to the improved fairness obtained by using a kthread per VIF. The bulk of the work is still done in a kthread, however, so significant differences were not expected and have not been seen in these results.

Debian VM to dom0 (IRQs manually distributed)

Debian VM (Split event channels) to dom0 (IRQs manually distributed)

Analysis of VM Tx (Debian to dom0)

For a single iperf thread, the patched netback with distributed IRQs outperforms the original netback. Adding more iperf threads closes this gap, which suggests that aggregate throughput is now limited mostly by interrupt handling rather than by buffer-related issues, whose effect would vary with the number of TCP streams (see the plots below). This is encouraging, since it indicates that throughput should scale with available CPU capacity. In the four-thread case, however, the patched netback still significantly underperforms the original. This warrants further investigation.
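
The page does not say how the IRQs were distributed manually. One common way on Linux is to write a hexadecimal CPU bitmask to `/proc/irq/<irq>/smp_affinity`, and the sketch below assumes that approach with a simple round-robin assignment across the six dom0 VCPUs. The IRQ numbers and the function names are hypothetical; `apply_masks` needs root and is defined but deliberately not called here.

```python
def affinity_masks(irqs, n_cpus):
    """Map each IRQ number to a hex bitmask selecting one CPU, round-robin."""
    return {irq: format(1 << (i % n_cpus), "x") for i, irq in enumerate(irqs)}


def apply_masks(masks):
    """Write each mask to /proc/irq/<irq>/smp_affinity (Linux, requires root)."""
    for irq, mask in masks.items():
        with open(f"/proc/irq/{irq}/smp_affinity", "w") as f:
            f.write(mask)


# Hypothetical IRQ numbers for eight VIFs; dom0 has six VCPUs.
masks = affinity_masks(range(100, 108), n_cpus=6)
for irq, mask in masks.items():
    print(irq, mask)
```

With eight VIFs and six VCPUs, two VCPUs each end up serving two IRQs under this scheme, which is one reason per-VCPU load may be uneven at higher VM counts.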