Xen-netback and xen-netfront multi-queue performance testing

The information on this page is outdated and does not reflect the latest performance of the Xen network drivers.

Please see https://events.linuxfoundation.org/sites/events/files/slides/zoltan_kiss_xensummit2014_network_perf.pdf for the latest information.

Overview

This page discusses the performance of an implementation of multiple transmit and receive queues (multiple shared rings) for xen-netback and xen-netfront. Packets are steered to a queue based on a hash of their L4 properties (TCP source and destination ports, IP source and destination addresses); packets that cannot be hashed this way are delivered to queue 0. All other transmit and receive processing is unchanged.
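The steering rule can be sketched as follows. This is a minimal illustration, not the drivers' code: it assumes addresses are given as strings and uses `zlib.crc32` purely as a stand-in for the kernel's own flow hash. The function name `select_queue` is hypothetical.

```python
import ipaddress
import zlib
from typing import Optional


def select_queue(num_queues: int, src_ip: str, dst_ip: str,
                 src_port: Optional[int], dst_port: Optional[int]) -> int:
    """Return the queue (shared-ring pair) index for a packet.

    Hypothetical sketch: zlib.crc32 stands in for the kernel's flow hash.
    """
    if num_queues <= 1 or src_port is None or dst_port is None:
        # Only one queue, or no L4 ports to hash on: use queue 0.
        return 0
    key = (ipaddress.ip_address(src_ip).packed
           + ipaddress.ip_address(dst_ip).packed
           + src_port.to_bytes(2, "big")
           + dst_port.to_bytes(2, "big"))
    # Every packet of one TCP stream produces the same key, hence the same queue.
    return zlib.crc32(key) % num_queues


if __name__ == "__main__":
    for sport in (5001, 5002, 5003, 5004):
        q = select_queue(4, "192.0.2.1", "192.0.2.2", sport, 5001)
        print(f"stream from port {sport} -> queue {q}")
```

Because placement depends only on the flow's hash, a stream never moves between queues, but several streams can land on the same queue.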

Inter-host Performance

Test Environment

A 64-bit Debian Wheezy VM with 4 VCPUs and 2 GB RAM was installed on a XenServer host whose dom0 kernel had been updated with the multi-queue netback patches, based on a release candidate (RC) of Linux 3.13. The guest's Debian kernel was replaced with a similar 3.13 RC kernel containing the multi-queue netfront patches. The host has 12 CPU cores (plus Hyper-Threading), and dom0 was allocated 8 VCPUs and 2 GB RAM. A non-debug Xen build was used, and no further host configuration was performed (in particular, VCPUs were not pinned to PCPUs). A second, identical host ran a 64-bit Debian Wheezy installation on bare metal. Both hosts have Intel Xeon X5650 CPUs running at 2.67 GHz and 36 GB of physical memory.

The two hosts are connected to each other via 10 Gbit/s NICs.

Tests were performed using iperf to generate TCP traffic in the required direction. In both directions, the TCP window size (-w) and the read/write buffer length (-l) were set to 256k. Each session ran for 60 seconds, reporting throughput at 5-second intervals (-i 5). The number of parallel TCP streams (-P) was varied between 1 and 8:

iperf -c <destination-ip> -w 256k -l 256k -t 60 -i 5 -P <num-tcp-streams>

Each test was repeated 4 times before the number of TCP streams was increased, following the pattern 1, 2, 4, 8. After each sequence of tests (1–8 streams), the VM was rebooted and the number of queues was increased in the same pattern. The whole procedure was then repeated with TCP traffic in the opposite direction, to cover both guest receive and guest transmit.
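The run order can be written down as a small schedule generator. This Python sketch only builds the iperf argument lists; it does not launch iperf or reboot the VM. The destination 192.0.2.10 is a placeholder documentation address, and `iperf_command` and `run_schedule` are hypothetical helper names. Per direction, the procedure amounts to 4 queue counts × 4 stream counts × 4 repetitions = 64 runs.

```python
from typing import Iterator, List, Tuple

PATTERN = (1, 2, 4, 8)  # queue counts and TCP stream counts tested
REPEATS = 4             # repetitions per (queues, streams) point


def iperf_command(dest: str, streams: int) -> List[str]:
    """Build the iperf client command line used for one run."""
    return ["iperf", "-c", dest, "-w", "256k", "-l", "256k",
            "-t", "60", "-i", "5", "-P", str(streams)]


def run_schedule(dest: str) -> Iterator[Tuple[int, int, int, List[str]]]:
    """Yield (queues, streams, repetition, command) in run order."""
    for queues in PATTERN:
        # The VM is rebooted here with `queues` queues configured;
        # how the queue count is set is not covered by this sketch.
        for streams in PATTERN:
            for rep in range(1, REPEATS + 1):
                yield queues, streams, rep, iperf_command(dest, streams)


if __name__ == "__main__":
    for queues, streams, rep, cmd in run_schedule("192.0.2.10"):
        print(f"q={queues} P={streams} run={rep}: {' '.join(cmd)}")
```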

Analysis Procedure

The throughput measurements from the repeated runs were combined into a mean and standard deviation for each combination of queue count and TCP stream count, yielding four datasets. Each dataset represents throughput as a function of the number of TCP streams for a given number of queues (ring pairs). Datasets are plotted as mean values, with error bars representing the standard deviation at each point.
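A sketch of this reduction step follows. It assumes one throughput figure (in Gbit/s) per run and uses the sample standard deviation; the page does not say which figure was taken from each iperf run or whether the sample or population standard deviation was used. `summarise` is a hypothetical name.

```python
from collections import defaultdict
from statistics import mean, stdev
from typing import Dict, Iterable, List, Tuple

Run = Tuple[int, int, float]      # (queues, streams, throughput in Gbit/s)
Point = Tuple[int, float, float]  # (streams, mean, standard deviation)


def summarise(runs: Iterable[Run]) -> Dict[int, List[Point]]:
    """Reduce repeated runs to (mean, stdev) per (queues, streams) point.

    Returns one dataset per queue count, ordered by number of TCP streams.
    """
    samples: Dict[Tuple[int, int], List[float]] = defaultdict(list)
    for queues, streams, gbps in runs:
        samples[(queues, streams)].append(gbps)

    datasets: Dict[int, List[Point]] = defaultdict(list)
    for (queues, streams), values in sorted(samples.items()):
        # Sample standard deviation (n - 1 denominator); needs >= 2 repeats.
        datasets[queues].append((streams, mean(values), stdev(values)))
    return dict(datasets)
```

Each entry of the returned dictionary corresponds to one plotted line, with the standard deviation giving the error bar at each point.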

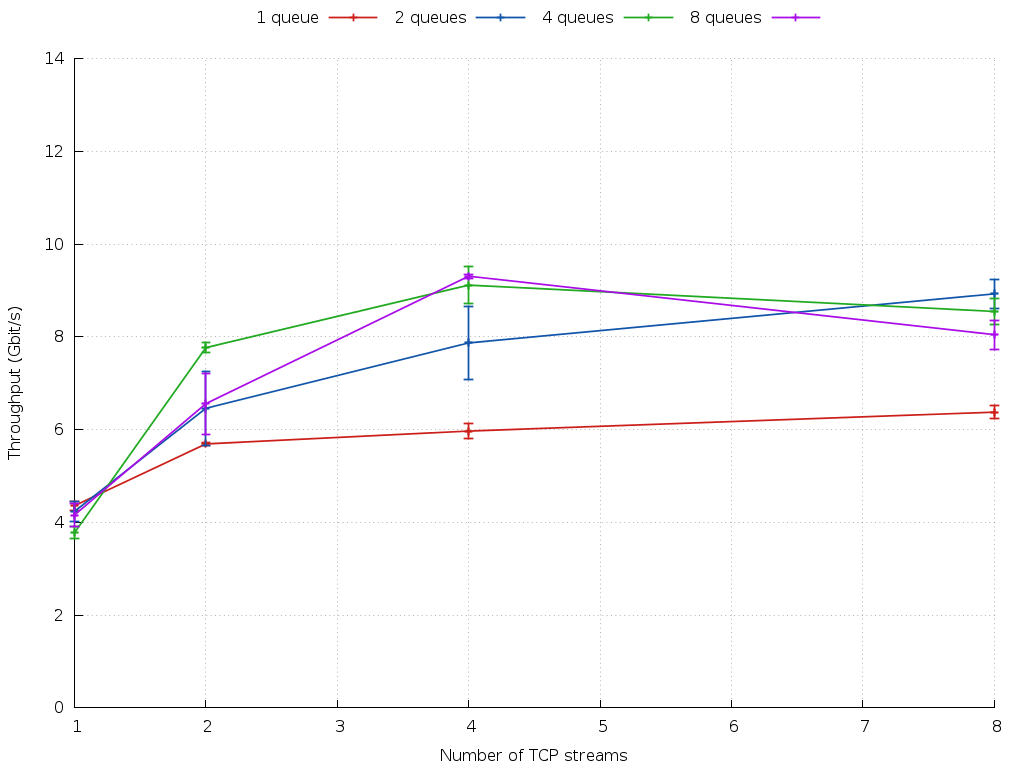

Results: VM Transmit

With a single TCP stream, all four lines start at approximately the same value, indicating no significant overhead from having multiple queues when only one TCP stream is active. The multi-queue lines (blue, green, purple) show a significant increase in aggregate throughput over a single queue when multiple TCP streams are active. The single-queue data rate plateaus at around 6.25 Gbit/s, while with 4 and 8 queues it is possible to reach 9.4 Gbit/s, which is effectively the line rate of a 10 Gbit/s NIC once Ethernet, IP and TCP overheads are accounted for.
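Why 9.4 Gbit/s counts as line rate can be checked with a short calculation. The sketch assumes a standard 1500-byte MTU, IPv4 without options and TCP timestamps enabled (the Linux default); the page does not state the MTU used. `tcp_goodput_gbps` is a hypothetical helper.

```python
def tcp_goodput_gbps(link_gbps: float = 10.0, mtu: int = 1500,
                     tcp_timestamps: bool = True) -> float:
    """Maximum TCP payload rate over Ethernet for full-sized IPv4 segments."""
    # Bytes each frame occupies on the wire beyond the IP packet (the MTU):
    # preamble + SFD (8), Ethernet header (14), FCS (4), inter-frame gap (12).
    wire_bytes = mtu + 8 + 14 + 4 + 12
    # TCP payload: MTU minus IPv4 header (20), TCP header (20) and, if
    # enabled, the 12-byte (padded) TCP timestamp option.
    payload = mtu - 20 - 20 - (12 if tcp_timestamps else 0)
    return link_gbps * payload / wire_bytes


print(f"{tcp_goodput_gbps():.2f} Gbit/s")  # prints "9.41 Gbit/s" (1448/1538 of 10 Gbit/s)
```

Under these assumptions no TCP flow can carry more than about 9.41 Gbit/s of payload over a 10 Gbit/s link, so the measured 9.4 Gbit/s leaves essentially no headroom.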

Some systematic uncertainty arises from the distribution of TCP streams across queues. For example, with four streams and four queues, the split could be 4+0, 3+1, 2+2, 2+1+1 or 1+1+1+1. Because the actual distribution in each test is not known, the data presented reflect a real-world scenario in which queue utilisation may not be ideal at all times. Artificially enforcing perfect balancing may improve aggregate throughput.
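How likely each split is can be estimated by enumeration. This sketch idealises the hash as placing every stream on a queue independently and uniformly at random; real placement is deterministic per flow, so the numbers only indicate how often perfect balance should be expected. `split_probabilities` is a hypothetical helper.

```python
from collections import Counter
from fractions import Fraction
from itertools import product
from typing import Dict


def split_probabilities(streams: int, queues: int) -> Dict[str, Fraction]:
    """Probability of each stream-to-queue split pattern, e.g. "2+1+1".

    Assumes each stream independently lands on any queue with equal probability.
    """
    patterns: Counter = Counter()
    for assignment in product(range(queues), repeat=streams):
        # Number of streams on each non-empty queue, largest first.
        loads = sorted(Counter(assignment).values(), reverse=True)
        patterns["+".join(map(str, loads))] += 1
    total = queues ** streams
    return {pattern: Fraction(count, total) for pattern, count in patterns.items()}


for pattern, p in sorted(split_probabilities(4, 4).items(), key=lambda kv: kv[1]):
    print(f"{pattern:>8}: {p} ({float(p):.1%})")
```

Under this model, four streams spread perfectly over four queues only 3/32 (about 9%) of the time, while 2+1+1 is the most likely outcome (9/16). The pattern printed as "4" is the 4+0 case.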

The results are also affected by the distribution of xenvif interrupts (event channels) across dom0 VCPUs. The strategy used here was to place the TX and RX interrupts of each queue on the same VCPU, while manually spreading the interrupts of different queues across different VCPUs. No VCPU pinning was used, so VCPUs may still migrate between physical CPUs.
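One way to express this placement is as a per-queue CPU mask. The sketch assumes a simple round-robin mapping of queues to dom0 VCPUs (the page only says the placement was done manually) and produces hexadecimal masks in the format accepted by Linux's /proc/irq/<irq>/smp_affinity. The IRQ numbers of each queue's TX and RX event channels would have to be looked up in /proc/interrupts, and actually writing the masks (as root) is left out. `affinity_plan` is a hypothetical helper.

```python
from typing import Dict, Tuple


def affinity_plan(num_queues: int, num_vcpus: int) -> Dict[int, Tuple[int, str]]:
    """Map each queue to one VCPU and the hex CPU mask for that VCPU.

    The TX and RX interrupts of a queue get the same mask, so they share a
    VCPU; different queues are spread round-robin across the VCPUs.
    """
    plan = {}
    for queue in range(num_queues):
        vcpu = queue % num_vcpus
        plan[queue] = (vcpu, format(1 << vcpu, "x"))
    return plan


for queue, (vcpu, mask) in affinity_plan(num_queues=4, num_vcpus=8).items():
    print(f"queue {queue}: tx and rx IRQs -> VCPU {vcpu} (smp_affinity mask {mask})")
```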

A further constraint was the lack of cores in the test system; while dom0 had 8 VCPUs, the VM only had 4 at its disposal. This is likely to be the cause of the drop in aggregate throughput with 8 TCP streams for the 4- and 8-queue data, i.e. the system has reached the point where TCP traffic generation and frontend processing is using all of the available guest VCPU capacity.

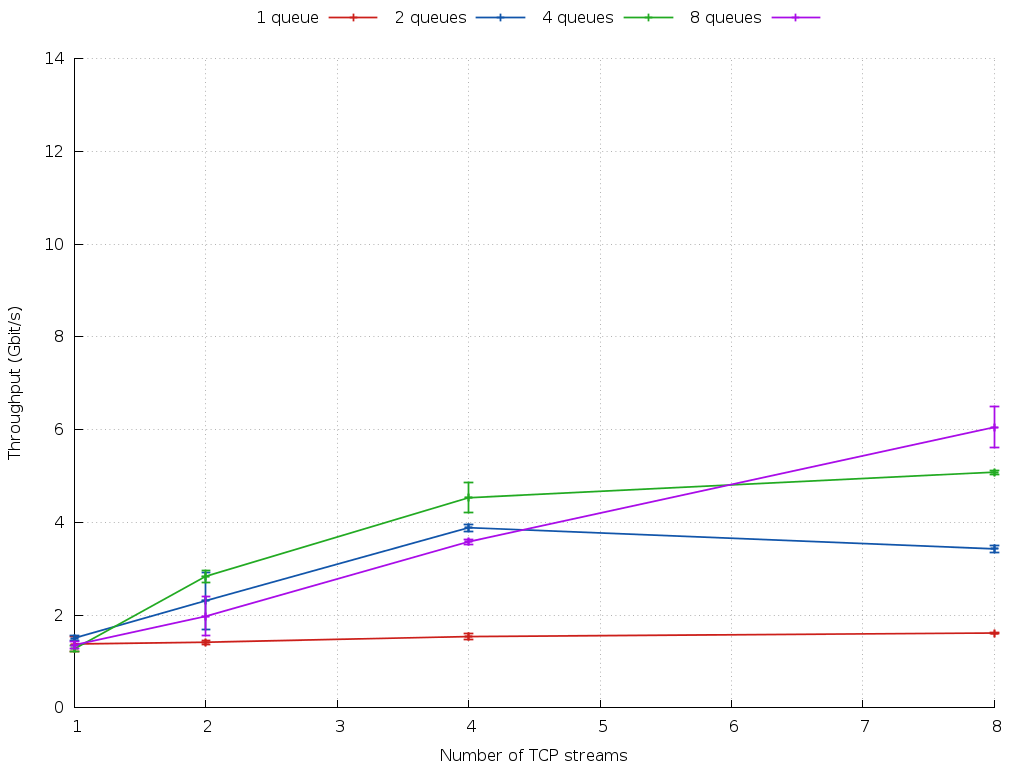

Results: VM Receive

With a single TCP stream, all four lines again begin at approximately the same value, indicating no significant overhead when processing a single stream. The multi-queue lines (blue, green, purple) again show a significant increase in aggregate throughput over a single queue (red line) when multiple TCP streams are active. Receiving network packets into a VM is known to be slower than transmitting from a VM, and with a single queue the aggregate throughput is limited to around 1.8 Gbit/s. With eight queues and eight streams, an aggregate throughput of 6 Gbit/s is possible.

Systematic uncertainty from the distribution of streams across the available queues is again present, as described above. The results are likewise affected by the distribution of interrupts across VCPUs and by the lack of available physical cores. In particular, no attempt was made to balance interrupts across the guest's VCPUs. Finally, these measurements were made with Generic Receive Offload (GRO) disabled; higher aggregate throughput should be achievable with GRO enabled.

Intra-host VM ⇔ VM Performance

Test Environment

Two 64-bit Debian Wheezy VMs were installed on a XenServer host with an updated 3.13 RC kernel containing the multi-queue netback patches. The guests' Debian kernels were updated to include the multi-queue netfront patches. Each VM had 16 VCPUs and 4 GB RAM. dom0 was configured with 8 VCPUs and 2 GB RAM (due to limitations of the 32-bit kernel, it was not possible to configure 16 VCPUs in dom0).

The host was a Haswell-class Intel Xeon system with 56 cores running at 2.2 GHz and 32 GB RAM.

Tests were performed using iperf between the two VMs, with parameters set as for the inter-host tests described above. Since this setup is symmetric, tests were carried out one-way only (i.e. from VM2 to VM1).

Analysis proceeded as described above.

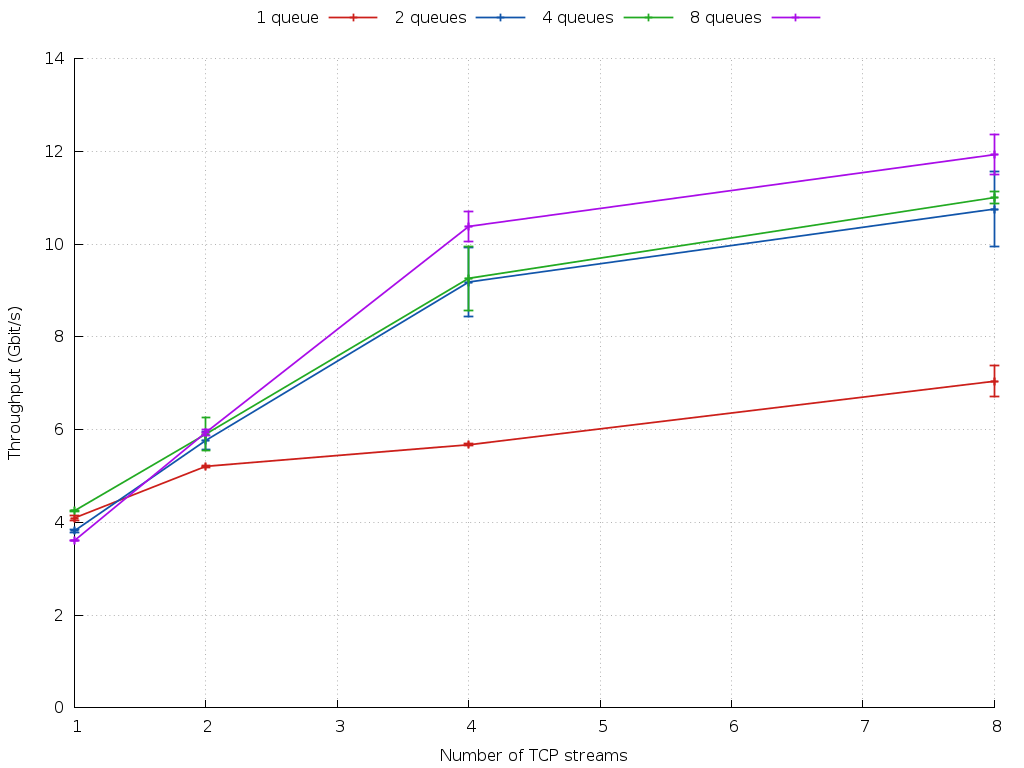

Results: VM ⇔ VM

Without the limitation imposed by a 10 Gbit/s NIC, and with enough CPUs to give each queue its own VCPU in both the guest and dom0, it becomes clear that increasing the number of queues always increases aggregate throughput when multiple TCP streams are used. The plot above shows a clear benefit from two or more queues when two or more TCP streams are active, although the additional gain from 2 to 8 queues appears comparatively small. The TCP streams are likely not ideally balanced across queues, which tends to reduce the apparent scaling. Comparing the 8-queue, 8-stream data point with the 1-queue, 8-stream point shows that this multi-queue implementation delivers a 71% improvement in aggregate throughput (i.e. 171% of the single-queue throughput).
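The two percentages are the same ratio expressed two ways. This minimal sketch (hypothetical helper `improvement_pct`) shows the relationship and applies it to the approximate inter-host figures quoted earlier on this page; the absolute VM ⇔ VM throughputs appear only in the plot, so they are not reproduced here.

```python
def improvement_pct(new_gbps: float, baseline_gbps: float) -> float:
    """Percentage improvement of `new_gbps` over `baseline_gbps`."""
    return (new_gbps / baseline_gbps - 1.0) * 100.0


# A 71% improvement means new = 1.71 * baseline, i.e. 171% of the baseline.
assert abs(improvement_pct(1.71, 1.0) - 71.0) < 1e-9

# Approximate inter-host figures quoted above (Gbit/s):
print(f"VM transmit: {improvement_pct(9.4, 6.25):.0f}%")  # prints "VM transmit: 50%"
print(f"VM receive:  {improvement_pct(6.0, 1.8):.0f}%")   # prints "VM receive:  233%"
```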

Conclusions

In all cases where multiple TCP streams were present, using more than one pair of shared rings (i.e. multiple queues) delivered an improvement in aggregate throughput. The size of this improvement depends strongly on the environment, in particular on the availability of distinct CPUs for processing, and will vary from system to system. Notably, the results above show that a Linux guest can saturate a 10 Gbit/s physical NIC using eight TCP streams and four or eight queues on its virtual interface.