Difference between revisions of "Xen on NUMA Machines"

Revision as of 10:16, 31 July 2012


Introduction

What is NUMA?

Dealing with Non-Uniform Memory Access (NUMA) machines is becoming more and more common. This is true whether you are part of an engineering research center with access to one of the first Intel SCC-based machines, a virtual machine hosting provider with a bunch of dual AMD Opteron 2376 machines in your server farm, or just a Xen developer using a dual-socket Xeon E5620 based test box. In short, NUMA means that the time a program running on a CPU needs to access memory depends on the relative distance between that CPU and that memory. Most NUMA systems are built so that each processor has its own local memory, which it can access very quickly. Reading and writing remote memory (that is, memory local to some other processor) is more complex and noticeably slower.

To check whether your machine is NUMA, try the xl info -n command. Here is (some of) what it reports on a machine with 2 NUMA nodes of 8 pCPUs each (pCPUs 0-7 ==> Node #0, pCPUs 8-15 ==> Node #1):

    # xl info -n
    host                   : Zhaman
    release                : 3.3.4-5.fc17.x86_64
    version                : #1 SMP Mon May 7 17:29:34 UTC 2012
    machine                : x86_64
    nr_cpus                : 16
    nr_nodes               : 2
    cores_per_socket       : 4
    threads_per_core       : 2
    ...
    total_memory           : 12285
    free_memory            : 706
    free_cpus              : 0
    cpu_topology           :
    cpu:    core    socket     node
      0:       0        1        0
      1:       0        1        0
      2:       1        1        0
      3:       1        1        0
      4:       9        1        0
      5:       9        1        0
      6:      10        1        0
      7:      10        1        0
      8:       0        0        1
      9:       0        0        1
     10:       1        0        1
     11:       1        0        1
     12:       9        0        1
     13:       9        0        1
     14:      10        0        1
     15:      10        0        1
    numa_info              : none
    xen_major              : 4
    xen_minor              : 1
    xen_extra              : .2
    ...
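The cpu_topology table printed by xl info -n can be turned into a node-to-pCPU map with a few lines of Python. This is only a sketch: the function name `cpus_per_node` is made up for illustration, and it assumes the table has exactly the four columns shown above (cpu, core, socket, node), one row per line.

```python
from collections import defaultdict


def cpus_per_node(xl_info_output: str) -> dict[int, list[int]]:
    """Map each NUMA node to the pCPUs listed under cpu_topology.

    Assumes rows of the form "<cpu>: <core> <socket> <node>".
    """
    nodes = defaultdict(list)
    in_topology = False
    for line in xl_info_output.splitlines():
        stripped = line.strip()
        if stripped.startswith("cpu_topology"):
            in_topology = True
            continue
        if not in_topology:
            continue
        fields = stripped.replace(":", " ").split()
        if len(fields) == 4 and all(f.isdigit() for f in fields):
            cpu, _core, _socket, node = map(int, fields)
            nodes[node].append(cpu)
        elif stripped and not stripped.startswith("cpu:"):
            break  # first line that is neither header nor row ends the table
    return dict(nodes)


# Shortened excerpt of the output above, used only to exercise the parser.
sample = """\
nr_nodes               : 2
cpu_topology           :
cpu:    core    socket     node
  0:       0        1        0
  1:       0        1        0
  8:       0        0        1
  9:       0        0        1
numa_info              : none
"""

print(cpus_per_node(sample))
```

On a NUMA host the result has more than one key; a single key means all pCPUs sit on one node.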

Why Care?

NUMA awareness becomes very important as soon as many domains start running memory-intensive workloads on a shared host. In fact, the cost of accessing non node-local memory locations is very high, and the performance degradation is likely to be noticeable.

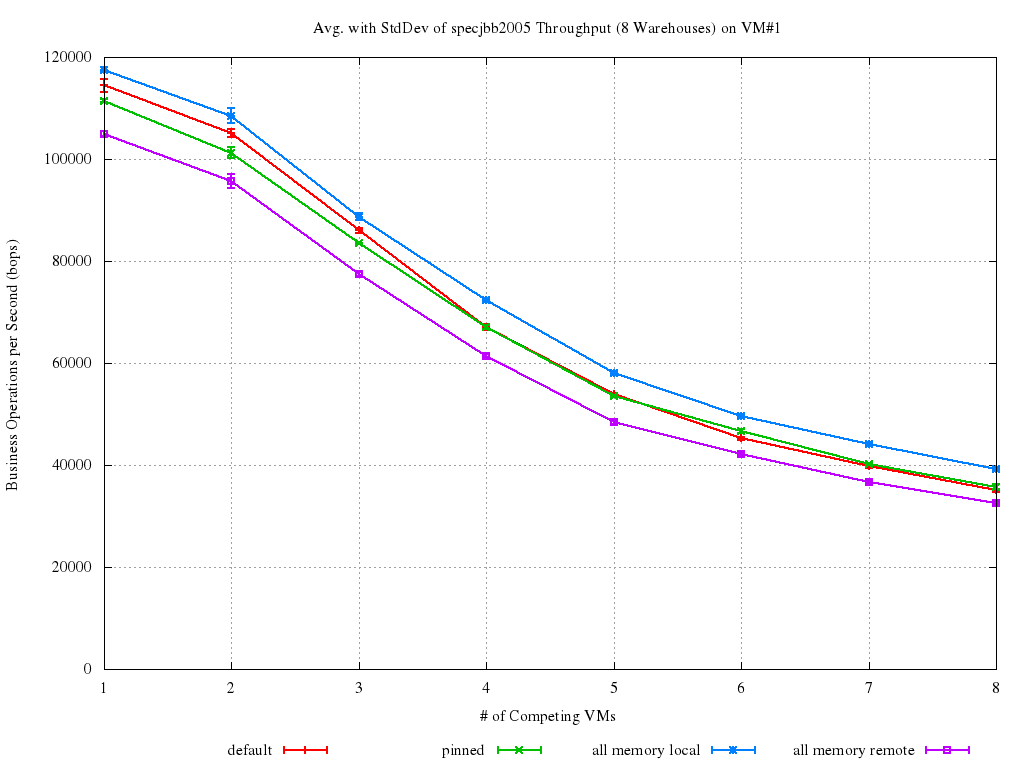

For example, let's look at a memory-intensive benchmark with a bunch of competing VMs running concurrently on a NUMA host. The plots below show what happened in such a case on a 2-node system.

The benchmark is SpecJBB2005, chosen on the assumption that it would put considerable stress on the memory subsystem, which turned out to be the case. The host is a 16-CPU, 2-NUMA-node, Xeon-based system with 12GB of RAM (2GB of which are reserved for Dom0). The Linux kernel for Dom0 was 3.2, and Xen was xen-unstable at the time of the benchmarking (i.e., more or less Xen 4.2). Guests have 4 vCPUs and 1GB of RAM each. The numbers come from running the benchmark on an increasing number (1 to 8) of Xen PV guests at the same time, repeating each run 5 times for each of the VM configurations below:

- default is the default Xen and xl behaviour, without any vCPU pinning at all;

- pinned means VM#1 was pinned on NODE#0 after being created. This implies its memory was striped across both nodes, but it can only run on the first one;

- all memory local (best case) means VM#1 was created on NODE#0 and kept there. That implies all its memory accesses are local, and we thus call it the best case;

- all memory remote (worst case) means VM#1 was created on NODE#0 and then moved (by explicitly pinning its vCPUs) to NODE#1. That implies all its memory accesses are remote, and we thus call it the worst case.

In all the experiments, only VM#1 was pinned or moved. All the other VMs have their memory "striped" between the two nodes and are free to run anywhere. The final score achieved by SpecJBB on VM#1 is reported below. As SpecJBB output is expressed in "business transactions per second (bops)", higher values correspond to better results.

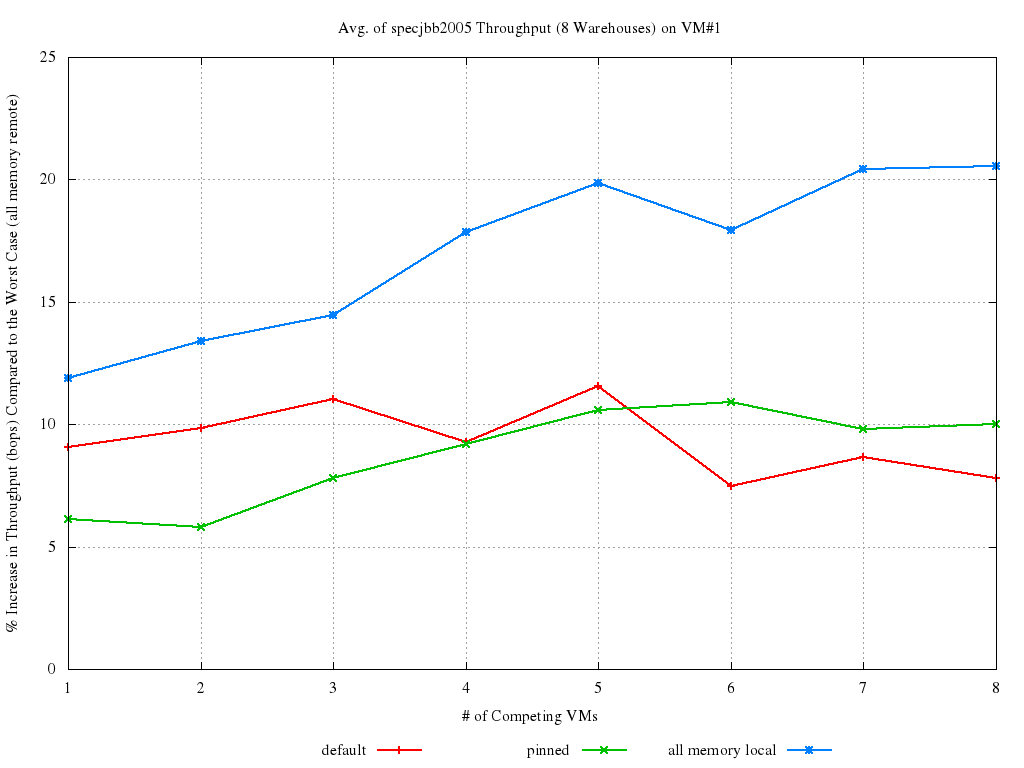

First of all, notice how small the standard deviation is for all the runs: this confirms that SpecJBB is a good benchmark for our purposes. The most interesting lines to look at and compare are the red and the blue ones. They show that things can improve quite a bit, even on such a small box, especially in the presence of heavy load (6 to 8 VMs). Look also at the percent increase in performance of each run with respect to the worst case (all memory remote):

This second graph makes it even clearer that NUMA placement accounts for a ~10% to 20% (depending on the load) impact on performance. The default Xen behavior is certainly not as bad as it could be: default almost always manages to get ~10% better performance than the worst case. Also, although pinning can help keep performance consistent, it doesn't always yield an improvement (and when it does, it is only by a few percentage points). There is a ~10% performance increase to gain (and even more under heavy load) if we manage to get default close enough to all memory local, and that is why we should care about NUMA. (The full set of results, with plots about all the statistical properties of the data, can be found here.)
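The percent increase with respect to the worst case is a plain relative difference. The sketch below uses made-up scores purely to show the arithmetic; they are not the measured SpecJBB results, and the `percent_gain` helper is a name introduced here.

```python
def percent_gain(score: float, worst: float) -> float:
    """Percent increase of `score` over the worst-case score."""
    return (score - worst) / worst * 100.0


# Hypothetical bops values for illustration only, NOT benchmark data.
example_scores = {
    "all memory remote (worst case)": 10000.0,
    "default": 11000.0,
    "all memory local (best case)": 12000.0,
}

worst = example_scores["all memory remote (worst case)"]
for name, score in example_scores.items():
    print(f"{name}: {percent_gain(score, worst):+.1f}%")
```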

NUMA and Xen

The Xen hypervisor already deals with NUMA in a number of ways. For example, each domain has its own node affinity, which is the set of NUMA nodes of the host from which memory for that domain is allocated (in equal parts). That becomes very important as soon as many domains start running memory-intensive workloads on a shared host. In fact, as soon as the majority of the memory accesses become remote, the degradation in performance is likely to be noticeable. An effective technique to deal with this architecture in a virtualization environment is virtual CPU (vCPU) pinning. In fact, if a domain can only run on a subset of the host’s physical CPUs (pCPUs), it is very easy to turn all its memory accesses into local ones.

Actually, this is exactly what Xen does by default. At domain creation time, it constructs the domain's node affinity based on the nodes that the pCPUs to which the domain's vCPUs are pinned belong to (provided the domain specifies some pinning in the config file!). So, something like the following, assuming pCPUs #0 to #3 belong to the same NUMA node, ensures the domain gets all its vCPUs and memory from that node:

    ...
    vcpus = '4'
    memory = '1024'
    cpus = "0-3"
    ...

This is quite effective, to the point that, starting from Xen 4.2, both the XenD and libxl/xl based toolstacks, if there is no vCPU pinning in the config file, try to figure out a suitable placement on their own and pin the vCPUs there!
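How a pinning such as cpus = "0-3" translates into a node affinity can be sketched as follows. This is an illustration of the idea, not the toolstack's code: `parse_cpu_list` and `node_affinity` are hypothetical helpers, only simple comma-separated ids and ranges are handled (the real cpus option accepts more forms), and the pCPU-to-node map is the one of the example host described earlier (pCPUs 0-7 on node 0, pCPUs 8-15 on node 1).

```python
def parse_cpu_list(spec: str) -> set[int]:
    """Expand a simple list such as "0-3" or "0,2,4-5" into pCPU ids."""
    cpus = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            low, high = part.split("-")
            cpus.update(range(int(low), int(high) + 1))
        else:
            cpus.add(int(part))
    return cpus


def node_affinity(spec: str, cpu_to_node: dict[int, int]) -> set[int]:
    """Nodes that own at least one of the pCPUs the domain is pinned to."""
    return {cpu_to_node[cpu] for cpu in parse_cpu_list(spec)}


# Assumed topology of the example host: 16 pCPUs, 8 per node.
example_cpu_to_node = {cpu: 0 if cpu < 8 else 1 for cpu in range(16)}

print(node_affinity("0-3", example_cpu_to_node))  # all pCPUs on node 0
print(node_affinity("6-9", example_cpu_to_node))  # spans both nodes
```

A pinning that stays within one node yields a single-node affinity, so all of the domain's memory is allocated there. A pinning that crosses nodes yields an affinity with both nodes, and memory is then split between them.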

Automatic NUMA Placement

If no cpus="..." option is specified in the config file, Xen tries to figure out on its own which node(s) the domain would fit best. It is worth noting that optimally fitting a set of VMs on the NUMA nodes of a host is an instance of the Bin Packing Problem. Since that problem is known to be NP-hard, heuristics are used instead.

So, among the nodes (or sets of nodes) that have enough free memory and enough physical CPUs to accommodate the domain, the one with the smallest number of vCPUs already running there is chosen. The domain is then placed there, simply by pinning all its vCPUs to all the pCPUs belonging to the chosen node(s).
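A minimal sketch of this selection rule, restricted to single nodes for brevity (sets of nodes are not considered here). The `NodeState` record and `pick_node` function are names invented for the example and do not correspond to any Xen data structure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class NodeState:
    node_id: int
    free_mem_mb: int    # free memory on the node
    nr_pcpus: int       # physical CPUs belonging to the node
    running_vcpus: int  # vCPUs already running there


def pick_node(nodes: list[NodeState], mem_mb: int, vcpus: int) -> Optional[NodeState]:
    """Among the nodes that can host the domain, pick the least loaded one."""
    fits = [n for n in nodes if n.free_mem_mb >= mem_mb and n.nr_pcpus >= vcpus]
    if not fits:
        return None
    return min(fits, key=lambda n: n.running_vcpus)


# Hypothetical host state: node 0 has more free memory but is busier.
host = [
    NodeState(node_id=0, free_mem_mb=4096, nr_pcpus=8, running_vcpus=12),
    NodeState(node_id=1, free_mem_mb=2048, nr_pcpus=8, running_vcpus=4),
]

chosen = pick_node(host, mem_mb=1024, vcpus=4)
print(chosen.node_id if chosen else "no single node fits")
```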

Automatic Placement in xl

In more detail, when using libxl (and, of course, xl) with Xen >= 4.2, automatic NUMA placement works as follows. First of all, the nodes (or sets of nodes) that have enough free memory and enough pCPUs are found. The idea is to find a spot for the domain with at least as much free memory as it is configured to have, and at least as many pCPUs as it has vCPUs. After that, the actual decision on which node to pick is made according to the following heuristics:

- in case more than one node is necessary, solutions involving fewer nodes are considered better. In case two (or more) suitable solutions span the same number of nodes,

- the node(s) with the smaller number of vCPUs runnable on them (due to previous placement and/or plain vCPU pinning) are considered better. In case the same number of vCPUs can run on two (or more) node(s),

- the node(s) with the greatest amount of free memory are considered to be the best.

As of now (Xen 4.2) there is no way to interact with the placement mechanism, for example to modify the heuristics that drive it. Of course, if vCPU pinning or cpupools are set up manually (look at the Tuning page), no automatic placement happens and the user's requests are honored.
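The three-step ordering used by xl (fewer nodes, then fewer runnable vCPUs, then more free memory) maps naturally onto a sort key. The sketch below enumerates every set of nodes by brute force, which is only reasonable for a handful of nodes. It is not the libxl implementation, and `best_placement` and its input layout are assumptions made for the example.

```python
from itertools import combinations
from typing import Optional


def best_placement(
    nodes: dict[int, tuple[int, int, int]], mem_mb: int, vcpus: int
) -> Optional[tuple[int, ...]]:
    """Pick a set of nodes for a domain.

    `nodes` maps node id -> (free_mem_mb, nr_pcpus, runnable_vcpus).
    Returns the chosen node ids, or None if nothing fits.
    """
    candidates = []
    for size in range(1, len(nodes) + 1):
        for combo in combinations(sorted(nodes), size):
            free = sum(nodes[n][0] for n in combo)
            pcpus = sum(nodes[n][1] for n in combo)
            if free < mem_mb or pcpus < vcpus:
                continue  # not enough memory or pCPUs in this set
            runnable = sum(nodes[n][2] for n in combo)
            # Tuple order encodes the heuristics: fewer nodes first,
            # then fewer runnable vCPUs, then more free memory.
            candidates.append((size, runnable, -free, combo))
    return min(candidates)[3] if candidates else None


# Hypothetical host state, for illustration only.
example_nodes = {0: (4096, 8, 12), 1: (2048, 8, 4)}

print(best_placement(example_nodes, mem_mb=1024, vcpus=4))
print(best_placement(example_nodes, mem_mb=5000, vcpus=4))
```

In the first call both nodes fit on their own, so the less loaded node wins. In the second call no single node has enough free memory, so the only candidate is the set of both nodes.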

Future Developments

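Preliminary patches (http://lists.xen.org/archives/html/xen-devel/2012-04/msg00732.html) introducing support for automatic placement in xl and NUMA-aware scheduling were posted to the xen-devel mailing list a while back. The results of some (equally preliminary) benchmarks have been discussed in two blog posts (http://blog.xen.org/index.php/2012/04/26/numa-and-xen-part-1-introduction/ and http://blog.xen.org/index.php/2012/05/16/numa-and-xen-part-ii-scheduling-and-placement/) and in two wiki articles (Xen_NUMA_Benchmarks and Xen_Numa_Scheduling_and_Placement).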

Xen 4.2 contains a slightly modified version of that placement algorithm, more specifically the one implemented by this patch series (http://lists.xen.org/archives/html/xen-devel/2012-07/msg01255.html). Work on improving it, as well as on adding further NUMA-related features, will continue throughout the Xen 4.3 development cycle (so stay tuned!).