Understanding the Virtualization Spectrum

Incorporates this great article by Brendan Gregg, which cleans up some of our historical terminology.


If you know anything about Xen Project, you have probably heard terms like PV, HVM, PVHVM, and PVH. This can be very confusing. But, with a little explanation, it is possible to understand what all these terms mean without suffering an aneurysm.

Full Virtualization

In the early days of virtualization (at least in the x86 world), the assumption was that you needed your hypervisor to provide a virtual machine that was functionally nearly identical to a real machine. This included the following aspects:

- Disk and network devices

- Interrupts and timers

- Emulated platform: motherboard, device buses, BIOS

- “Legacy” boot: i.e., starting in 16-bit mode and bootstrapping up to 64-bit mode

- Privileged instructions

- Pagetables (memory access)

In the early days of x86 virtualization, all of this needed to be virtualized: disk and network devices needed to be emulated, as did interrupts and timers, the motherboard and PCI buses, and so on. Guests needed to start in 16-bit mode and run a BIOS which loaded the guest kernel, which (again) ran in 16-bit mode, then bootstrapped its way up to 32-bit mode, and possibly then to 64-bit mode. All privileged instructions executed by the guest kernel needed to be emulated somehow; and the pagetables needed to be emulated in software.

This mode, in which every aspect of the virtual machine must be functionally identical to real hardware, is called "fully virtualized mode" or "hardware virtualized mode", abbreviated HVM.

Xen Project and Paravirtualization

Unfortunately, particularly for x86, virtualizing privileged instructions is very complicated. Many instructions for x86 behave differently in kernel and user mode without generating a trap, meaning that your options for running kernel code were to do full software emulation (incredibly slow) or binary translation (incredibly complicated, and still very slow).

The key question of the original Xen Project research project at Cambridge University was, “What if instead of trying to fool the guest kernel into thinking it’s running on real hardware, you just let the guest know that it was running in a virtual machine, and changed the interface you provide to make it easier to implement?” To answer that question, they started from the ground up designing a new interface designed for virtualization. Working together with researchers at both the Intel and Microsoft labs, they took both Linux and Windows XP, and ripped out anything that was slow or difficult to virtualize, replacing it with calls into the hypervisor (hypercalls) or other virtualization-friendly techniques. (The Windows XP port to Xen 1.0, as you might imagine, never left Microsoft Research; but it was benchmarked in the original paper.)

The result was impressive — by getting rid of all the difficult legacy interfaces, they were able to make a fast, very lightweight hypervisor in under 70,000 lines of code.

This technique of changing the interface to make it easy to virtualize they called paravirtualization (PV). In a paravirtualized VM, guests run with fully paravirtualized disk and network interfaces; interrupts and timers are paravirtualized; there is no emulated motherboard or device bus; guests boot directly into the kernel in the mode the kernel wishes to run in (32-bit or 64-bit), without needing to start in 16-bit mode or go through a BIOS; all privileged instructions are replaced with paravirtualized equivalents (hypercalls); and access to the page tables is paravirtualized as well.

Xen Project and Full Virtualization

In early versions of Xen Project, paravirtualization was the only mode available. Although Windows XP had been ported to the Xen Project platform, it was pretty clear that such a port was never going to see the light of day outside Microsoft Research. This meant, essentially, that only open-source operating systems were going to be able to run on our hypervisor.

At the same time the Xen Project team was coming up with paravirtualization, the engineers at Intel and AMD were working to try to make full virtualization easier. The result was something we now call HVM — which stands for “hardware virtual machine”. Rather than needing to do software emulation or binary translation, the HVM extensions do what might be called “hardware emulation”.

Technically speaking, HVM refers to a set of extensions that make it much simpler to virtualize one component: the processor. To run a fully virtualized guest, many other components still need to be virtualized. To accomplish this, the Xen Project integrated qemu to emulate disk, network, motherboard, and PCI devices; wrote the shadow code, to virtualize the pagetables; wrote emulated interrupt controllers in Xen; and integrated ROMBIOS to provide a virtual BIOS to the guest.

Even though the HVM extensions are only one component of making a fully virtualized VM, the “fully virtualized” mode in the hypervisor was called HVM mode, distinguishing it from PV mode. This usage spread throughout the toolstack and into the user interface; to this day, users generally speak of running a VM in “PV mode” or in “HVM mode”.

Once you have a fully-virtualized system, the first thing you notice is that the interface you provide for network and disks — that is, emulating a full PCI card with MMIO registers and so on — is really unnecessarily complicated. Because nearly all modern kernels have ways to load third-party device drivers, it’s a fairly obvious step to create disk and network drivers that can use the paravirtualized interfaces. Running in this mode can be called fully virtualized with PV drivers.

From Poles to a Spectrum

Xen Project may have started with two polar modes, but it has since developed into a spectrum running between the end points of Paravirtualization and Full Virtualization.

This spectrum has evolved over time. It arose when people began to realize that both poles were deficient when it came to performance. Over time, the project's engineers developed enhancements aimed at improving overall performance. These enhancements borrow concepts from the opposite pole to create hybrids which come closer and closer to the optimal mode.

Enhancements

Enhancements to HVM:

HVM with PV drivers:

Fully virtualized network and disk drivers have to look and act like real hardware. Data has to be packed the way the real hardware would expect it, even if the data won't actually pass over the device. That's not as efficient as the simple structures employed in Paravirtualized drivers. So, to make HVM faster, Xen Project provides a way to employ special network and disk drivers on guests which use efficient PV drivers on the back end. It's an incremental step toward higher performance.

PVHVM:

But fully virtualized mode, even with PV drivers, has a number of things that are unnecessarily inefficient. One example is the interrupt controllers: fully virtualized mode provides the guest kernel with emulated interrupt controllers (APICs and IOAPICs). Each instruction that interacts with the APIC requires a trip up into Xen and a software instruction decode; and each interrupt delivered requires several of these emulations.

As it turns out, many of the paravirtualized interfaces for interrupts, timers, and so on are actually available for guests running in HVM mode; they just need to be turned on and used. The paravirtualized interfaces use memory pages shared with Xen, and are streamlined to minimize traps into the hypervisor.

So Stefano Stabellini wrote some patches for the Linux kernel that allowed Linux, when it detects that it’s running in HVM mode under Xen, to switch from using the emulated interrupt controllers and timers to the paravirtualized interrupts and timers. This mode he called PVHVM mode, because although it runs in HVM mode, it uses the PV interfaces extensively.
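From user space, a rough way to see which mode a Linux guest booted in is to read the hypervisor entries in sysfs. The sketch below is only an illustration: it assumes the kernel exposes `/sys/hypervisor/type` and `/sys/hypervisor/guest_type` (the latter is present only on more recent kernels), and the function names and the `sysfs_root` parameter are hypothetical helpers, not part of any Xen tool.

```python
from pathlib import Path
from typing import Optional


def read_sysfs(path: Path) -> Optional[str]:
    """Return the stripped contents of a sysfs file, or None if unreadable."""
    try:
        return path.read_text().strip()
    except OSError:
        return None


def describe_xen_guest(sysfs_root: str = "/sys") -> str:
    """Summarize whether this Linux system looks like a Xen guest.

    Assumption: the kernel was built with Xen sysfs support, so that
    <sysfs_root>/hypervisor/type contains "xen" on a Xen guest.
    """
    hypervisor = Path(sysfs_root) / "hypervisor"
    if read_sysfs(hypervisor / "type") != "xen":
        return "not a Xen guest (or Xen sysfs support is not enabled)"
    # guest_type is missing on older kernels, so treat it as optional.
    guest_type = read_sysfs(hypervisor / "guest_type")
    if guest_type is None:
        return "Xen guest, mode unknown (no guest_type file)"
    return f"Xen guest booted in {guest_type} mode"


if __name__ == "__main__":
    print(describe_xen_guest())
```

Note that this reports only how the guest booted (PV, HVM, or PVH). An HVM guest using the PVHVM interfaces still reports HVM, which fits the idea that PVHVM is an HVM guest making extensive use of the PV interfaces.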

Enhancements to PV:

PVH:

A lot of the choices Xen Project made when designing a PV interface were made before HVM extensions were available. Nearly all hardware now has HVM extensions available, and nearly all also include hardware-assisted pagetable virtualization. What if we could run a fully PV guest — one that had no emulated motherboard, BIOS, or anything like that — but used the HVM extensions to make the PV MMU unnecessary, as well as to speed up system calls in 64-bit mode?

This is exactly what PVH mode is. It’s a fully PV kernel mode, running with paravirtualized disk and network, paravirtualized interrupts and timers, no emulated devices of any kind (and thus no qemu), no BIOS or legacy boot — but instead of requiring PV MMU, it uses the HVM hardware extensions to virtualize the pagetables, as well as system calls and other privileged operations.

We fully expect PVH to have the best characteristics of all the modes — a simple, fast, secure interface, low memory overhead, while taking full advantage of the hardware. If HVM had been available at the time the Xen hypervisor was designed, PVH is probably the mode we would have chosen to use. In fact, in the new ARM Xen port, it is the primary mode that guests will operate in.

Once PVH is well-established (perhaps five years or so after it’s introduced), we will probably consider removing non-PVH support from the Linux kernel, making maintenance of Xen support for Linux much simpler. The Xen hypervisor will probably support older kernels for some time after that. However, rest assured that none of this will be done without consideration of the community.

Given the number of other things in the fully virtualized – paravirtualized spectrum, finding a descriptive name has been difficult. The developers have more or less settled on “PVH” (mainly PV, but with a little bit of HVM), but it has in the past been called other things, including “PV in an HVM container” (or just “HVM containers”), and “Hybrid mode”.

What to Choose?

The first step is to identify which pole (PV or HVM) to start from. The rules of thumb for choosing are:

- PV: Use this if the PVH feature is supported, which boots the best type of hybrid. You may also need to choose this if the processor doesn't do HVM: e.g., following the EC2 instance type matrix, where you will end up with a fully PV mode instance.

- HVM: Until PVH is ready for production work, this is the preferred mode. Xen Project and the guest kernel are likely to support a number of enhancements which use Xen Project's PV code paths, so the booted instance will be a hybrid. The degree to which the hybrid approaches optimal function will depend on the number of enhancements supported in the hardware. In HVM, later hardware means support for more enhancements; so if you want the best results, use more recent hardware.

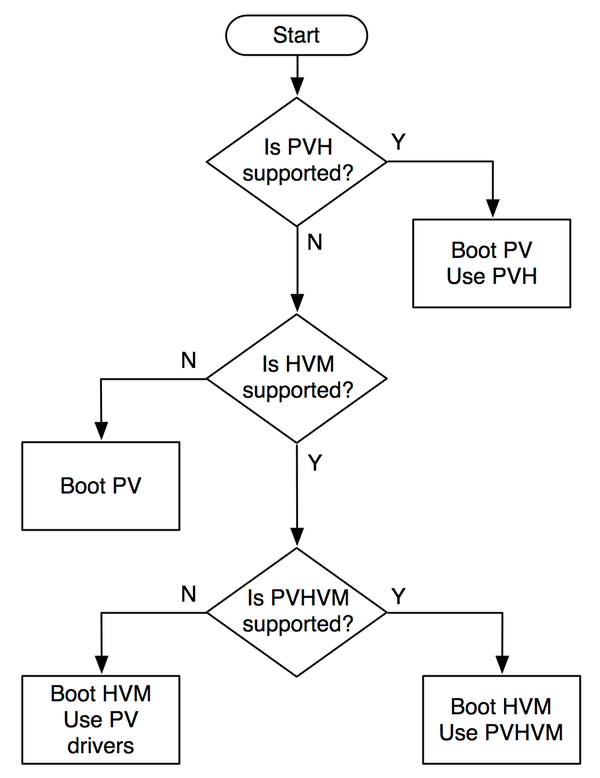

The flowchart below, devised by Brendan Gregg, shows the method for choosing the optimal performance selection for most situations. While it is possible that some people may have edge cases which may require a slightly different selection, most people will find their way to the top of the performance heap just by following this simple logic flow:

PVHVM and PVH can be thought of as features and not modes. The choices are PV or HVM, which describes how the instance boots, and your choice may be influenced by the features available. Currently, on EC2, PVH is not available, but PVHVM is: so the best choice for Linux (in general) would be booting an "HVM" instance with PVHVM enabled (e.g., setting CONFIG_XEN_PVHVM).
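The rules of thumb above can be written as a few lines of code. This is a minimal sketch of one reading of those rules, not a transcription of the flowchart; the function name and both parameters are hypothetical and would have to be filled in from what your platform actually offers.

```python
def choose_boot_mode(pvh_supported: bool, cpu_has_hvm: bool) -> str:
    """Pick a boot mode following the PV/HVM rules of thumb in the text.

    pvh_supported: the platform and guest kernel both offer the PVH feature.
    cpu_has_hvm:   the processor provides the HVM extensions.
    """
    if pvh_supported:
        # PVH is reached from the PV pole and gives the best hybrid.
        return "PV (with the PVH feature)"
    if cpu_has_hvm:
        # Without PVH, boot HVM and let the guest enable the PV code paths.
        return "HVM (with PVHVM enabled in the guest)"
    # No HVM extensions at all: a fully PV instance is the only option.
    return "PV"


# The EC2 situation described above: no PVH, but HVM is available.
print(choose_boot_mode(pvh_supported=False, cpu_has_hvm=True))
```

Edge cases may still call for a different choice, so treat the result as a starting point.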

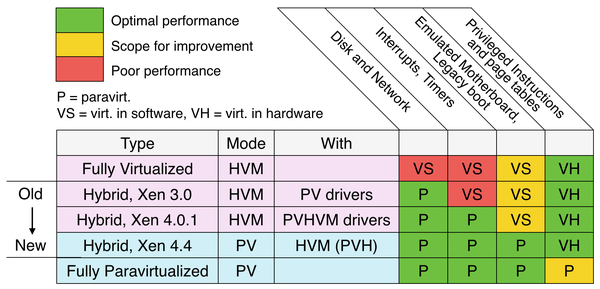

I've redrawn the spectrum picture on the right to emphasize the two modes, and to group all mixed types as "Hybrid", showing that they are a progression of Xen versions, rather than a group of modes to choose from. Is this helpful?

If you don't find this an improvement, I'll try a different way to clarify what your Xen guest is doing in my next post, where I'll cover tools for feature detection.

Enhanced Networking

While my spectrum diagram may be an improvement, I've left a loose end untied: SR-IOV for enhanced networking. If the instance type supports it, this uses hardware virtualization enhancements to improve performance and provide an interface better suited for virtualization. In some ways, this is an evolution of I/O controllers that is similar to what processors have done, with their own virtualization extensions.

SR-IOV, if you can enable it, should provide the best I/O performance. So perhaps the first feature column in the spectrum diagram should really show two options: P (paravirt) as yellow, and VH (SR-IOV) as green. Here's how it looks (although I'm not sure all the hybrid types support SR-IOV).

Problems with paravirtualization: AMD and x86-64

It comes as a surprise to many people that while 32-bit paravirtualized guests in Xen are faster than 32-bit fully virtualized guests, when running in 64-bit mode, paravirtualized guests can sometimes be slower than fully virtualized guests. This is due to some changes AMD made when designing the architecture which simplified things for them, but made things more difficult for Xen.

Most modern operating systems need just two levels of protection: user mode and kernel mode. Kernel mode memory is protected from user mode memory via the pagetable “supervisor mode” bit.

When running a virtual machine, you need at least three levels of protection: user mode, guest kernel, and hypervisor. The hypervisor memory needs to be protected from the guest kernel, and the guest kernel memory needs to be protected from the user. The pagetable protections only provide two levels of protection, so Xen uses another processor feature, called a segmentation limit, to provide the third level of protection. Segmentation limits are a processor feature that was in common use before paging was available. But since paging has been available, segmentation limits have basically not been used; so Xen was able to commandeer them to provide the extra level of necessary protection. The pagetable protections protect both the guest kernel and Xen from userspace; the segmentation limits protect Xen from the guest kernel.
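To make the two mechanisms concrete, here is a toy model of the checks involved. It is a sketch under stated assumptions: the address layout (`XEN_START`) is a hypothetical value chosen for illustration, user-mode segments are assumed to be flat so that only the pagetable check stops user mode, and real hardware performs these checks in the MMU rather than in software.

```python
from dataclasses import dataclass

# Hypothetical 32-bit layout, for illustration only: Xen sits at the top of
# the address space and everything below XEN_START belongs to the guest.
XEN_START = 0xFC00_0000
ADDRESS_SPACE_TOP = 0xFFFF_FFFF


@dataclass(frozen=True)
class Context:
    name: str
    is_user_mode: bool   # compared against the pagetable supervisor bit
    segment_limit: int   # highest address reachable through the segment


XEN = Context("Xen", is_user_mode=False, segment_limit=ADDRESS_SPACE_TOP)
# The guest kernel's segments are truncated so that they stop short of Xen.
GUEST_KERNEL = Context("guest kernel", is_user_mode=False,
                       segment_limit=XEN_START - 1)
# Assumed flat here: the pagetable check is what keeps user mode out.
GUEST_USER = Context("guest user", is_user_mode=True,
                     segment_limit=ADDRESS_SPACE_TOP)


def may_access(ctx: Context, addr: int, page_is_supervisor: bool) -> bool:
    """Apply the segmentation check first, then the pagetable check."""
    if addr > ctx.segment_limit:
        return False  # blocked by the segmentation limit
    if page_is_supervisor and ctx.is_user_mode:
        return False  # blocked by the pagetable supervisor bit
    return True


xen_page = XEN_START + 0x1000   # a supervisor page inside Xen
kernel_page = 0xC000_0000       # a supervisor page inside the guest kernel

print(may_access(GUEST_USER, kernel_page, page_is_supervisor=True))
print(may_access(GUEST_KERNEL, xen_page, page_is_supervisor=True))
print(may_access(XEN, xen_page, page_is_supervisor=True))
```

The pagetable bit alone cannot tell the guest kernel from Xen, since both run in supervisor mode. Only the truncated segment limit separates them, which is why losing segmentation limits in 64-bit mode matters so much in what follows.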

Unfortunately, at the time that the Xen team was developing clever new uses for this little-used feature, AMD was designing their 64-bit extensions to the x86 architecture. Any unused processor feature makes hardware much more complicated to design, reason about, and verify. Since basically no operating systems use segmentation limits, AMD decided to get rid of them.

This may have greatly simplified the architecture for AMD, but it made it impossible for Xen to squeeze three levels of protection into the same address space. Instead, for 64-bit PV guests, both guest kernel and guest user-space need to run in ring 3, each with their own address space. Every time a guest process needs to make a system call, it has to bounce up into Xen, which will context-switch to the guest kernel. This not only takes more time for each system call, but requires flushing one of the key CPU caches, called a TLB. Frequent flushing of the TLB causes all execution to run more slowly for some time afterwards, as the TLB is filled up again.
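The extra work can be counted with a small model. This is a sketch, not a measurement: the stage names are informal labels, and the return path through Xen for the PV case is an assumption added here to complete the round trip. For comparison it includes a path in which user and kernel share one address space, as on real hardware.

```python
def count_costs(path, address_space_of):
    """Return (privilege transitions, address-space switches) for a path.

    A value of None in address_space_of means the stage is mapped into
    whichever address space is currently active, so entering it does not
    force a switch.
    """
    transitions = len(path) - 1
    switches = 0
    current = address_space_of[path[0]]
    for stage in path[1:]:
        space = address_space_of[stage]
        if space is not None and space != current:
            switches += 1  # each switch implies TLB flushing
            current = space
    return transitions, switches


# 64-bit PV: user and kernel each have their own address space, and every
# crossing goes through Xen (return path assumed to mirror the entry path).
pv64_path = ["guest user", "Xen", "guest kernel", "Xen", "guest user"]
pv64_spaces = {"guest user": "user", "guest kernel": "kernel", "Xen": None}

# Shared address space: the kernel is entered directly from user mode.
shared_path = ["guest user", "guest kernel", "guest user"]
shared_spaces = {"guest user": "shared", "guest kernel": "shared"}

print(count_costs(pv64_path, pv64_spaces))
print(count_costs(shared_path, shared_spaces))
```

Under these assumptions the 64-bit PV round trip costs twice as many transitions and two address-space switches, where the shared-address-space case needs none.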

In 64-bit HVM mode, the problem doesn’t occur. The HVM extensions make it easy to have three different protection levels without needing to play clever tricks with little-used processor features. So making system calls in 64-bit HVM mode is just as fast as on real hardware. For this reason, a lot of people began running 64-bit Linux in fully virtualized mode.

Problems with paravirtualization: Linux and the PV MMU

PVHVM mode allows 64-bit guests to run at near native speed, taking advantage of both the hardware virtualization extensions and the paravirtualized interfaces of Xen. But it still leaves something to be desired. For one, it still requires the overhead of an emulated BIOS and legacy boot. Secondly, it requires the extra memory overhead of a qemu instance to emulate the motherboard and PCI devices. For this reason, memory-conscious or security-conscious users may opt to use 64-bit PV anyway, even if it is somewhat slower.

But there is one PV guest that can never be run in PVHVM mode, and that is domain 0. Because having a domain 0 with the current Linux drivers will always be necessary, it will always be necessary to have a PV mode in the Linux kernel.

But what’s the problem, you ask? Weren’t all of the features necessary to run Linux as a dom0 upstreamed in Linux 3.0?

Yes, they were; but they are still occasionally the source of some irritation. The core changes required to paravirtualize the page tables (also known as the “PV MMU”) are straightforward and work well once the system is up and running. However, while the kernel is booting, before the normal MMU is up and running, the story is a bit different. The changes required for the early MMU are fragile, and are often inadvertently broken when making seemingly innocent changes. This makes both the x86 maintainers and the pvops maintainers unhappy, consuming time and emotional energy that could be used for other purposes.

The paravirtualization spectrum

So to summarize: There are a number of things that can be either virtualized or paravirtualized when creating a VM; these include:

- Disk and network devices

- Interrupts and timers

- Emulated platform: motherboard, device buses, BIOS, legacy boot

- Privileged instructions and pagetables (memory access)

Each of these can be fully virtualized or paravirtualized independently. This leads to a spectrum of virtualization modes, summarized in the table below:

The first three of these will all be classified as “HVM mode”, and the last two as “PV mode” for historical reasons. PVH is the new mode, which we expect to be a sweet spot between full virtualization and paravirtualization: it combines the best advantages of Xen’s PV mode with full utilization of hardware support.
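Going by the descriptions of each mode earlier in this article, the spectrum can also be written down as a small lookup table. This is a sketch assembled from those descriptions rather than an official definition; the labels and names are illustrative, and the privileged-instruction column assumes the HVM hardware extensions are in use.

```python
SOFTWARE = "virtualized (software)"
HARDWARE = "virtualized (hardware)"
PARAVIRT = "paravirtualized"

COMPONENTS = (
    "disk and network",
    "interrupts and timers",
    "platform and legacy boot",
    "privileged instructions and pagetables",
)

# Ordered from the fully virtualized pole to the paravirtualized pole.
SPECTRUM = {
    "HVM":                 (SOFTWARE, SOFTWARE, SOFTWARE, HARDWARE),
    "HVM with PV drivers": (PARAVIRT, SOFTWARE, SOFTWARE, HARDWARE),
    "PVHVM":               (PARAVIRT, PARAVIRT, SOFTWARE, HARDWARE),
    "PVH":                 (PARAVIRT, PARAVIRT, PARAVIRT, HARDWARE),
    "PV":                  (PARAVIRT, PARAVIRT, PARAVIRT, PARAVIRT),
}


def boot_class(mode: str) -> str:
    """Historical classification: first three are HVM mode, last two PV."""
    position = list(SPECTRUM).index(mode)
    return "HVM mode" if position < 3 else "PV mode"


for mode, handling in SPECTRUM.items():
    print(f"{mode} ({boot_class(mode)})")
    for component, how in zip(COMPONENTS, handling):
        print(f"  {component}: {how}")
```

Reading down the rows, each step toward PV replaces one more emulated component with a paravirtualized one. PVH differs from PV only in leaving privileged instructions and pagetables to the hardware.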

Hopefully this has given you an insight into what the various modes are, how they came about, and what the advantages and disadvantages of each are.